
| Design document | |
|---|---|
| Revision | v1 |
| Status | proposed |

Add qcow tool to allow VDI import/export

Introduction

At XCP-ng, we are working on overcoming the 2TiB limitation for VM disks while preserving essential features such as snapshots, copy-on-write capabilities, and live migration.

To achieve this, we are introducing Qcow2 support in SMAPI and the blktap driver. With the alpha release, we can:

- Create a VDI
- Snapshot it
- Export and import it to/from XVA
- Perform full backups

However, we currently cannot export a VDI to a Qcow2 file, nor import one.

The purpose of this design proposal is to outline a solution for implementing VDI import/export in Qcow2 format.

Design Proposal

The import and export of VHD-based VDIs currently rely on vhd-tool, which is responsible for streaming data between a VDI and a file. It supports both Raw and VHD formats, but not Qcow2.

There is an existing tool called qcow-tool originally packaged by MirageOS. It is no longer actively maintained, but it can produce Qcow files readable by QEMU.

Currently, qcow-tool does not support streaming, but we propose to add this capability. This means replicating the approach used in vhd-tool, where data is pushed to a socket.

We have contacted the original developer, David Scott, and there are no objections to us maintaining the tool if needed.

Therefore, the most appropriate way to enable Qcow2 import/export in XAPI is to add streaming support to qcow-tool.

XenAPI changes

The workflow

- The export and import of VDIs are handled by the XAPI HTTP server:
  - GET /export_raw_vdi
  - PUT /import_raw_vdi
- The corresponding handlers are Export_raw_vdi.handler and Import_raw_vdi.handler.
- Since the format is checked in the handler, we need to add support for Qcow2, as currently only Raw, Tar, and Vhd are supported.
- This requires adding a new type in the Importexport.Format module and a new content type: application/x-qemu-disk. See mime-types format.
- This allows the format to be properly decoded. Currently, all formats use a wrapper called Vhd_tool_wrapper, which sets up parameters for vhd-tool. We need to add a new wrapper for the Qcow2 format, which will instead use qcow-tool, a tool that we will package (see the section below).
- The new wrapper will be responsible for setting up parameters (source, destination, etc.). Since it only manages Qcow2 files, we don’t need to pass additional format information.
- The format (qcow2) will be specified in the URI. For example: /import_raw_vdi?session_id=<OpaqueRef>&task_id=<OpaqueRef>&vdi=<OpaqueRef>&format=qcow2
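To make the URI format concrete, here is a minimal Python sketch that builds the request target for an import with format=qcow2. The helper name import_raw_vdi_uri is hypothetical; only the path and the query parameter names come from the example above. Values are percent-encoded, so the colon inside an OpaqueRef is sent as %3A.

```python
from urllib.parse import urlencode


def import_raw_vdi_uri(session_id: str, task_id: str, vdi: str, image_format: str = "qcow2") -> str:
    """Build the request target for PUT /import_raw_vdi.

    Hypothetical helper: the parameter names follow the example URI in this
    design; it is not part of any XenAPI client library.
    """
    query = urlencode(
        {
            "session_id": session_id,
            "task_id": task_id,
            "vdi": vdi,
            "format": image_format,  # "qcow2" is what selects the new Qcow2 handling
        }
    )
    return "/import_raw_vdi?" + query
```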

Adding and modifying qcow-tool

We need to package qcow-tool.

This new tool will be called from ocaml/xapi/qcow_tool_wrapper.ml, as described in the previous section.

- To export a VDI to a Qcow2 file, we need to add functionality similar to Vhd_tool_wrapper.send, which calls vhd-tool stream.
- It writes data from the source to a destination. Unlike vhd-tool, which supports multiple destinations, we will only support Qcow2 files.
- Here is a typical call to vhd-tool stream:

```
/bin/vhd-tool stream \
    --source-protocol none \
    --source-format hybrid \
    --source /dev/sm/backend/ff1b27b1-3c35-972e-76ec-a56fe9f25e36/87711319-2b05-41a3-8ee0-3b63a2fc7035:/dev/VG_XenStorage-ff1b27b1-3c35-972e-76ec-a56fe9f25e36/VHD-87711319-2b05-41a3-8ee0-3b63a2fc7035 \
    --destination-protocol none \
    --destination-format vhd \
    --destination-fd 2585f988-7374-8131-5b66-77bbc239cbb2 \
    --tar-filename-prefix \
    --progress \
    --machine \
    --direct \
    --path /dev/mapper:.
```

- To import a VDI from a Qcow2 file, we need to implement functionality similar to Vhd_tool_wrapper.receive, which calls vhd-tool serve.
- This is the reverse of the export process. As with export, we will only support a single type of import: from a Qcow2 file.
- Here is a typical call to vhd-tool serve:

```
/bin/vhd-tool serve \
    --source-format raw \
    --source-protocol none \
    --source-fd 3451d7ed-9078-8b01-95bf-293d3bc53e7a \
    --tar-filename-prefix \
    --destination file:///dev/sm/backend/f939be89-5b9f-c7c7-e1e8-30c419ee5de6/4868ac1d-8321-4826-b058-952d37a29b82 \
    --destination-format raw \
    --progress \
    --machine \
    --direct \
    --destination-size 180405760 \
    --prezeroed
```

- We don’t need to offer different protocols or formats. As we will not support different formats, we just need to handle copying data from a socket into a file and from a file into a socket. Sockets and files will be managed in qcow_tool_wrapper. forkhelpers.ml manages the list of file descriptors, and we will mimic what the vhd-tool wrapper does to link a UUID to a socket.
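The copy described in the last point can be sketched in Python as a plain read/write loop over two already-open file descriptors. This is an illustration under assumptions, not qcow-tool code: copy_fd and the 1 MiB chunk size are hypothetical, and the sketch only moves bytes, exactly as the point above describes.

```python
import os

CHUNK_SIZE = 1 << 20  # 1 MiB per read; an arbitrary value chosen for this sketch


def copy_fd(src_fd: int, dst_fd: int, chunk_size: int = CHUNK_SIZE) -> int:
    """Copy everything readable from src_fd to dst_fd and return the byte count.

    The same loop serves both directions (socket to file on import, file to
    socket on export), since it only sees file descriptors.
    """
    total = 0
    while True:
        data = os.read(src_fd, chunk_size)
        if not data:  # EOF: the file has ended or the peer closed the socket
            return total
        view = memoryview(data)
        while view:  # os.write may write fewer bytes than requested
            written = os.write(dst_fd, view)
            view = view[written:]
        total += len(data)
```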

| Design document | |
|---|---|
| Revision | v3 |
| Status | proposed |

Add supported image formats in sm-list

Introduction

At XCP-ng, we are enhancing support for QCOW2 images in SMAPI. The primary motivation for this change is to overcome the 2TB size limitation imposed by the VHD format. By adding support for QCOW2, a Storage Repository (SR) will be able to host disks in VHD and/or QCOW2 formats, depending on the SR type. In the future, additional formats—such as VHDx—could also be supported.

We need a mechanism to expose to end users which image formats are supported by a given SR. The proposal is to extend the SM API object with a new field that clients (such as XenCenter, XenOrchestra, etc.) can use to determine the available formats.

Design Proposal

To expose the available image formats to clients (e.g., XenCenter, XenOrchestra, etc.), we propose adding a new field called supported_image_formats to the Storage Manager (SM) module. This field will be included in the output of the SM.get_all_records call.

- With this new information, all parameters of the SM object can be listed with:

```
# xe sm-list params=all
```

Output of the command will look like this (notice that the CLI uses hyphens):

```
uuid ( RO) : c6ae9a43-fff6-e482-42a9-8c3f8c533e36
name-label ( RO) : Local EXT3 VHD
name-description ( RO) : SR plugin representing disks as VHD files stored on a local EXT3 filesystem, created inside an LVM volume
type ( RO) : ext
vendor ( RO) : Citrix Systems Inc
copyright ( RO) : (C) 2008 Citrix Systems Inc
required-api-version ( RO) : 1.0
capabilities ( RO) [DEPRECATED] : SR_PROBE; SR_SUPPORTS_LOCAL_CACHING; SR_UPDATE; THIN_PROVISIONING; VDI_ACTIVATE; VDI_ATTACH; VDI_CLONE; VDI_CONFIG_CBT; VDI_CREATE; VDI_DEACTIVATE; VDI_DELETE; VDI_DETACH; VDI_GENERATE_CONFIG; VDI_MIRROR; VDI_READ_CACHING; VDI_RESET_ON_BOOT; VDI_RESIZE; VDI_SNAPSHOT; VDI_UPDATE
features (MRO) : SR_PROBE: 1; SR_SUPPORTS_LOCAL_CACHING: 1; SR_UPDATE: 1; THIN_PROVISIONING: 1; VDI_ACTIVATE: 1; VDI_ATTACH: 1; VDI_CLONE: 1; VDI_CONFIG_CBT: 1; VDI_CREATE: 1; VDI_DEACTIVATE: 1; VDI_DELETE: 1; VDI_DETACH: 1; VDI_GENERATE_CONFIG: 1; VDI_MIRROR: 1; VDI_READ_CACHING: 1; VDI_RESET_ON_BOOT: 2; VDI_RESIZE: 1; VDI_SNAPSHOT: 1; VDI_UPDATE: 1
configuration ( RO) : device: local device path (required) (e.g. /dev/sda3)
driver-filename ( RO) : /opt/xensource/sm/EXTSR
required-cluster-stack ( RO) :
supported-image-formats ( RO) : vhd, raw, qcow2
```

Implementation details

The supported_image_formats field will be populated by retrieving information from the SMAPI drivers. Specifically, each driver will update its DRIVER_INFO dictionary with a new key, supported_image_formats, which will contain a list of strings representing the supported image formats (for example: ["vhd", "raw", "qcow2"]). Although the formats are listed as a list of strings, they are treated as a set: specifying the same format multiple times has no effect.

Driver behavior without supported_image_formats

If a driver does not provide this information (as is currently the case with existing drivers), the default value will be an empty list. This signifies that the driver determines which format to use when creating a VDI. During a migration, the destination driver will choose the format of the VDI if none is explicitly specified. This ensures backward compatibility with both current and future drivers.
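On the driver side, the two rules above (a list treated as a set, and an empty default for drivers that declare nothing) could look like the following minimal Python sketch. Apart from the supported_image_formats key, the DRIVER_INFO contents and the supported_image_formats() helper are illustrative assumptions.

```python
# Hypothetical excerpt of an SMAPIv1 driver module: only the
# "supported_image_formats" key comes from this design.
DRIVER_INFO = {
    "name": "Local EXT3 VHD",
    # A list of strings treated as a set: the repeated "vhd" has no effect.
    "supported_image_formats": ["vhd", "raw", "qcow2", "vhd"],
}


def supported_image_formats(driver_info: dict) -> frozenset:
    """Return the formats a driver advertises.

    A driver without the key (every existing driver today) yields an empty
    set, meaning the driver itself chooses the format of new VDIs.
    """
    return frozenset(driver_info.get("supported_image_formats", []))
```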

Specifying image formats for VDI creation

If the supported image formats are exposed to the client, then, when creating a new VDI, the user can specify the desired format via the sm_config parameter image-format=qcow2 (or any other supported format). If no format is specified, the driver will use its preferred default format. If the specified format is not supported, an error will be generated indicating that the SR does not support it. Here is how it can be achieved using the XE CLI:

```
# xe vdi-create \
    sr-uuid=cbe2851e-9f9b-f310-9bca-254c1cf3edd8 \
    name-label="A new VDI" \
    virtual-size=10240 \
    sm-config:image-format=vhd
```

Specifying image formats for VDI migration

When migrating a VDI, an API client may need to specify the desired image format if the destination SR supports multiple storage formats.

VDI pool migrate

To support this, a new parameter, dest_img_format, is introduced to VDI.pool_migrate. This field accepts a string specifying the desired format (e.g., qcow2), ensuring that the VDI is migrated in the correct format. The new signature of VDI.pool_migrate will be VDI ref pool_migrate (session ref, VDI ref, SR ref, string, (string -> string) map).

If the specified format is not supported or cannot be used (e.g., due to size limitations), an error will be generated. Validation will be performed as early as possible to prevent disruptions during migration. These checks can be performed by examining the XAPI database to determine whether the SR provided as the destination has a corresponding SM object with the expected format. If this is not the case, a format not found error will be returned.

If no format is specified by the client, the destination driver will determine the appropriate format.
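The early validation described above (and the similar check made when a format is requested at VDI creation) can be sketched in Python as follows. FormatNotFound and check_requested_format are hypothetical names standing in for the real Xapi error and check; the supported list would come from the SM record of the destination SR.

```python
from typing import Iterable, Optional


class FormatNotFound(Exception):
    """Hypothetical stand-in for the 'format not found' error."""


def check_requested_format(requested: Optional[str], supported: Iterable[str]) -> Optional[str]:
    """Validate a client-requested image format before any SM call is made.

    Returns the format to pass on, or None when the client did not ask for
    one, in which case the destination driver picks the format itself.
    """
    if requested is None:
        return None
    if requested not in set(supported):
        raise FormatNotFound(requested)
    return requested
```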

```
# xe vdi-pool-migrate \
    uuid=<VDI_UUID> \
    sr-uuid=<SR_UUID> \
    dest-img-format=qcow2
```

VM migration to remote host

A VDI migration can also occur during a VM migration. In this case, we need to be able to specify the expected destination format as well. Unlike VDI.pool_migrate, which applies to a single VDI, VM migration may involve multiple VDIs.

The current signature of VM.migrate_send is (session ref, VM ref, (string -> string) map, bool, (VDI ref -> SR ref) map, (VIF ref -> network ref) map, (string -> string) map, (VGPU ref -> GPU_group ref) map). Thus there is already a parameter that maps each source VDI to its destination SR. We propose to add a new parameter that allows specifying the desired destination format for a given source VDI: (VDI ref -> string) map. It is similar to the VDI-to-SR mapping. We will update the XE CLI to support this new parameter. It would be image-format:<source-vdi-uuid>=<destination-image-format>:

```
# xe vm-migrate \
    host-uuid=<HOST_UUID> \
    remote-master=<IP> \
    remote-password=<PASS> \
    remote-username=<USER> \
    vdi:<VDI1_UUID>=<SR1_DEST_UUID> \
    vdi:<VDI2_UUID>=<SR2_DEST_UUID> \
    image-format:<VDI1_UUID>=vhd \
    image-format:<VDI2_UUID>=qcow2 \
    uuid=<VM_UUID>
```

The destination image format would be a string such as vhd, qcow2, or another supported format. Specifying a format is optional. If it is omitted, the driver managing the destination SR will determine the appropriate format.

As with VDI pool migration, if the requested format is not supported by the SM driver, a format not found error will be returned. The validation must happen before sending a creation message to the SM driver, ideally at the same time as checking whether all VDIs can be migrated.

To be able to check the format, we will need to modify VM.assert_can_migrate and add the mapping from VDI references to their image formats, as is done in VM.migrate_send.
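For VM migration the same check has to run over every entry of the new (VDI ref -> string) map before anything is sent to the SM driver. Below is a minimal Python sketch, assuming plain dictionaries in place of the XAPI database; the function and argument names are hypothetical. It collects every problem instead of stopping at the first one, which is a design choice of the sketch rather than something this proposal specifies.

```python
from typing import Dict, List


def check_vm_migration_formats(
    vdi_to_sr: Dict[str, str],
    vdi_to_format: Dict[str, str],
    sr_formats: Dict[str, List[str]],
) -> List[str]:
    """Return one message per VDI whose requested format is unavailable.

    vdi_to_sr mirrors the existing (VDI ref -> SR ref) map, vdi_to_format the
    proposed (VDI ref -> string) map, and sr_formats the supported image
    formats of the SM record behind each destination SR. VDIs absent from
    vdi_to_format are not checked: their destination driver picks the format.
    Assumes every VDI in vdi_to_format also appears in vdi_to_sr.
    """
    problems = []
    for vdi, fmt in vdi_to_format.items():
        sr = vdi_to_sr[vdi]
        if fmt not in sr_formats.get(sr, []):
            problems.append(f"{vdi}: format {fmt} not found on SR {sr}")
    return problems
```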

Impact

It should have no impact on existing storage repositories that do not provide any information about the supported image format.

This change impacts the SM data model, and as such, the XAPI database version will be incremented. It also impacts the API.

- Data Model:
  - A new field (supported_image_formats) is added to the SM records.
  - A new parameter is added to VM.migrate_send: (VDI ref -> string) map
  - A new parameter is added to VM.assert_can_migrate: (VDI ref -> string) map
  - A new parameter is added to VDI.pool_migrate: string
- Client Awareness: Clients like the xe CLI will now be able to query and display the supported image formats for a given SR.
- Database Versioning: The XAPI database version will be updated to reflect this change.

| Design document | |
|---|---|
| Revision | v3 |
| Status | proposed |
| Review | #144 |
| Revision history | |
| v1 | Initial version |
| v2 | Included some open questions under Xapi point 2 |
| v3 | Added new error, task, and assumptions |

Aggregated Local Storage and Host Reboots

Introduction

When hosts use an aggregated local storage SR, disks are mirrored to several different hosts in the pool (RAID). This ensures that if a host goes down (e.g. due to a reboot after installing a hotfix or upgrade, or when “fenced” by the HA feature), all disk contents in the SR are still accessible. This also means that if all disks are mirrored to just two hosts (worst-case scenario), only one host may be down at any point in time to keep the SR fully available.

When a node comes back up after a reboot, it will resynchronise all its disks with the related mirrors on the other hosts in the pool. This syncing takes some time, and only after it is done may we consider the host “up” again and allow another host to be shut down.

Therefore, when installing a hotfix to a pool that uses aggregated local storage, or doing a rolling pool upgrade, we need to make sure that we do hosts one-by-one, and we wait for the storage syncing to finish before doing the next.

This design aims to provide guidance and protection around this by blocking hosts from being shut down or rebooted from the XenAPI except when safe, and by setting the host.allowed_operations field accordingly.

XenAPI

If an aggregated local storage SR is in use, and one of the hosts is rebooting or down (for whatever reason), or resynchronising its storage, the operations reboot and shutdown will be removed from the host.allowed_operations field of all hosts in the pool that have a PBD for the SR.

This is a conservative approach in that it assumes that this kind of SR tolerates only one node “failure”, and assumes no knowledge about how the SR distributes its mirrors. We may refine this in future, in order to allow some hosts to be down simultaneously.
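The conservative rule can be modelled in a few lines of Python. This is only a model: the real change belongs in valid_operations in xapi_host_helpers.ml (OCaml), and HostState, allowed_operations and base_ops (standing for whatever the other rules already allow) are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet

POWER_OPS = frozenset({"reboot", "shutdown"})


@dataclass(frozen=True)
class HostState:
    live: bool                # models host_metrics.live
    going_down: bool = False  # a host.reboot or host.shutdown is in progress
    resyncing: bool = False   # the storage backend reports a mirror rebuild


def allowed_operations(
    hosts_with_pbd: Dict[str, HostState], base_ops: FrozenSet[str]
) -> Dict[str, FrozenSet[str]]:
    """Assume the SR tolerates a single node 'failure'.

    If any host with a PBD for the aggregated local storage SR is down, going
    down, or resynchronising, none of those hosts may be rebooted or shut
    down through the XenAPI.
    """
    unsafe = any(
        not s.live or s.going_down or s.resyncing for s in hosts_with_pbd.values()
    )
    ops = base_ops - POWER_OPS if unsafe else base_ops
    return {host: ops for host in hosts_with_pbd}
```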

The presence of the reboot operation in host.allowed_operations indicates whether the host.reboot XenAPI call is allowed or not (similarly for shutdown and host.shutdown). It will not, of course, prevent anyone from rebooting a host from the dom0 console or power switch.

Clients, such as XenCenter, can use host.allowed_operations, when applying an update to a pool, to guide them when it is safe to update and reboot the next host in the sequence.

In case host.reboot or host.shutdown is called while the storage is busy resyncing mirrors, the call will fail with a new error MIRROR_REBUILD_IN_PROGRESS.

Xapi

Xapi needs to be able to:

- Determine whether aggregated local storage is in use; this just means that a PBD for such an SR is present.
  - TBD: To avoid SR-specific code in xapi, the storage backend should tell us whether it is an aggregated local storage SR.
- Determine whether the storage system is resynchronising its mirrors; it will need to be able to query the storage backend for this kind of information.
  - Xapi will poll for this and will reflect that a resync is happening by creating a Task for it (in the DB). This task can be used to track progress, if available.
  - The exact way to get the syncing information from the storage backend is SR specific. The check may be implemented in a separate script or binary that xapi calls from the polling thread. Ideally this would be integrated with the storage backend.
- Update host.allowed_operations for all hosts in the pool according to the rules described above. This comes down to updating the function valid_operations in xapi_host_helpers.ml, and will need to use a combination of the functionality from the two points above, plus an indication of host liveness from host_metrics.live.
- Trigger an update of the allowed operations when a host shuts down or reboots (due to a XenAPI call or otherwise), and when it has finished resynchronising when back up. Triggers must be in the following places (some may already be present, but are listed for completeness, and to confirm this):
  - Wherever host_metrics.live is updated to detect pool slaves going up and down (probably at least in Db_gc.check_host_liveness and Xapi_ha).
  - Immediately when a host.reboot or host.shutdown call is executed: Message_forwarding.Host.{reboot,shutdown,with_host_operation}.
  - When a storage resync is starting or finishing.

All of the above runs on the pool master (= SR master) only.
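The polling and triggering steps above can be sketched as a single Python poll step that the pool master would run periodically. Every callable is a hypothetical stand-in: is_resyncing for the SR-specific check, create_task and finish_task for the Task kept in the database, and refresh_allowed_operations for recomputing host.allowed_operations.

```python
from typing import Callable, Optional


class ResyncPoller:
    """Hypothetical model of the pool-master polling step described above."""

    def __init__(
        self,
        is_resyncing: Callable[[], bool],
        create_task: Callable[[str], str],
        finish_task: Callable[[str], None],
        refresh_allowed_operations: Callable[[], None],
    ) -> None:
        self.is_resyncing = is_resyncing  # SR-specific check (script, binary or backend call)
        self.create_task = create_task  # creates a Task in the DB and returns its ref
        self.finish_task = finish_task  # marks that Task as completed
        self.refresh = refresh_allowed_operations  # recomputes host.allowed_operations
        self.task: Optional[str] = None  # Task ref while a resync is in progress

    def poll_once(self) -> None:
        syncing = self.is_resyncing()
        if syncing and self.task is None:  # a resync has started
            self.task = self.create_task("storage mirror resync")
            self.refresh()
        elif not syncing and self.task is not None:  # the resync has finished
            self.finish_task(self.task)
            self.task = None
            self.refresh()
```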

Assumptions

The above will be safe if the storage cluster is equal to the XenServer pool. In general, however, it may be desirable to have a storage cluster that is larger than the pool, have multiple XS pools on a single cluster, or even share the cluster with other kinds of nodes.

To ensure that the storage is “safe” in these scenarios, xapi needs to be able to ask the storage backend:

- if a mirror is being rebuilt “somewhere” in the cluster, AND

- if “some node” in the cluster is offline (even if the node is not in the XS pool).

If the cluster is equal to the pool, then xapi can do point 2 without asking the storage backend, which will simplify things. For the moment, we assume that the storage cluster is equal to the XS pool, to avoid making things too complicated (while keeping in mind that we may change this in future).

| Design document | |
|---|---|
| Revision | v1 |
| Status | confirmed |

Backtrace support

We want to make debugging easier by recording exception backtraces which are

- reliable

- cross-process (e.g. xapi to xenopsd)

- cross-language

- cross-host (e.g. master to slave)

We therefore need

- to ensure that backtraces are captured in our OCaml and python code

- a marshalling format for backtraces

- conventions for storing and retrieving backtraces

Backtraces in OCaml

OCaml has fast exceptions which can be used for both

- control flow i.e. fast jumps from inner scopes to outer scopes

- reporting errors to users (e.g. the toplevel or an API user)

To keep the exceptions fast, exceptions and backtraces are decoupled: there is a single active backtrace per-thread at any one time. If you have caught an exception and then throw another exception, the backtrace buffer will be reinitialised, destroying your previous records. For example consider a ‘finally’ function:

```ocaml
let finally f cleanup =
  try
    let result = f () in
    cleanup ();
    result
  with e ->
    cleanup ();
    raise e (* <-- backtrace starts here now *)
```

This function performs some action (i.e. f ()) and guarantees to perform some cleanup action (cleanup ()) whether or not an exception is thrown. This is a common pattern to ensure resources are freed (e.g. closing a socket or file descriptor). Unfortunately the raise e in the exception handler loses the backtrace context: when the exception gets to the toplevel, Printexc.get_backtrace () will point at the finally rather than the real cause of the error.

We will use a variant of the solution proposed by Jacques-Henri Jourdan where we will record backtraces when we catch exceptions, before the buffer is reinitialised. Our finally function will now look like this:

```ocaml
let finally f cleanup =
  try
    let result = f () in
    cleanup ();
    result
  with e ->
    Backtrace.is_important e;
    cleanup ();
    raise e
```

The function Backtrace.is_important e associates the exception e with the current backtrace before it gets deleted.

Xapi always has high-level exception handlers or other wrappers around all the threads it spawns. In particular Xapi tries really hard to associate threads with active tasks, so it can prefix all log lines with a task id. This helps admins see the related log lines even when there is lots of concurrent activity. Xapi also tries very hard to label other threads with names for the same reason (e.g. db_gc). Every thread should end up being wrapped in with_thread_named which allows us to catch exceptions and log stacktraces from Backtrace.get on the way out.

OCaml design guidelines

Making nice backtraces requires us to think when we write our exception raising and handling code. In particular:

- If a function handles an exception and re-raises it, you must call Backtrace.is_important e with the exception to capture the backtrace first.
- If a function raises a different exception (e.g. Not_found becoming a XenAPI INTERNAL_ERROR) then you must use Backtrace.reraise <old> <new> to ensure the backtrace is preserved.
- All exceptions should be printable: if the generic printer doesn’t do a good enough job then register a custom printer.
- If you are the last person who will see an exception (because you aren’t going to rethrow it) then you may log the backtrace via Debug.log_backtrace e if and only if you reasonably expect the resulting backtrace to be helpful and not spammy.
- If you aren’t the last person who will see an exception (because you are going to rethrow it or another exception), then do not log the backtrace; the next handler will do that.
- All threads should have a final exception handler at the outermost level; for example, Debug.with_thread_named will do this for you.

Backtraces in python

Python exceptions behave similarly to the OCaml ones: if you raise a new exception while handling an exception, the backtrace buffer is overwritten. Therefore the same considerations apply.

Python design guidelines

The function sys.exc_info() can be used to capture the traceback associated with the last exception. We must guarantee to call this before constructing another exception. In particular, this does not work:

```python
raise MyException(sys.exc_info())
```

Instead you must capture the traceback first:

```python
exc_info = sys.exc_info()
raise MyException(exc_info)
```

Marshalling backtraces

We need to be able to take an exception thrown from python code, gather the backtrace, transmit it to an OCaml program (e.g. xenopsd) and glue it onto the end of the OCaml backtrace. We will use a simple json marshalling format for the raw backtrace data consisting of

- a string summary of the error (e.g. an exception name)

- a list of filenames

- a corresponding list of lines

(Note we don’t use the more natural list of pairs as this confuses the “rpclib” code generating library)

In python:

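```python
import json
import sys
import traceback

s = sys.exc_info()  # (type, value, traceback) of the exception being handled
frames = traceback.extract_tb(s[2])
results = {
    "error": str(s[1]),
    "files": [frame.filename for frame in frames],  # parallel lists, not pairs
    "lines": [frame.lineno for frame in frames],
}
print(json.dumps(results))
```

In OCaml: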

```ocaml
type error = {
  error: string;
  files: string list;
  lines: int list;
} with rpc

print_string (Jsonrpc.to_string (rpc_of_error ...))
```

Retrieving backtraces

Backtraces will be written to syslog as usual. However it will also be possible to retrieve the information via the CLI to allow diagnostic tools to be written more easily.

The CLI

We add a global CLI argument “--trace” which requests that the backtrace be printed, if one is available:

```
# xe vm-start vm=hvm --trace
Error code: SR_BACKEND_FAILURE_202
Error parameters: , General backend error [opterr=exceptions must be old-style classes or derived from BaseException, not str],
Raised Server_error(SR_BACKEND_FAILURE_202, [ ; General backend error [opterr=exceptions must be old-style classes or derived from BaseException, not str]; ])
Backtrace:
0/50 EXT @ st30 Raised at file /opt/xensource/sm/SRCommand.py, line 110
1/50 EXT @ st30 Called from file /opt/xensource/sm/SRCommand.py, line 159
2/50 EXT @ st30 Called from file /opt/xensource/sm/SRCommand.py, line 263
3/50 EXT @ st30 Called from file /opt/xensource/sm/blktap2.py, line 1486
4/50 EXT @ st30 Called from file /opt/xensource/sm/blktap2.py, line 83
5/50 EXT @ st30 Called from file /opt/xensource/sm/blktap2.py, line 1519
6/50 EXT @ st30 Called from file /opt/xensource/sm/blktap2.py, line 1567
7/50 EXT @ st30 Called from file /opt/xensource/sm/blktap2.py, line 1065
8/50 EXT @ st30 Called from file /opt/xensource/sm/EXTSR.py, line 221
9/50 xenopsd-xc @ st30 Raised by primitive operation at file "lib/storage.ml", line 32, characters 3-26
10/50 xenopsd-xc @ st30 Called from file "lib/task_server.ml", line 176, characters 15-19
11/50 xenopsd-xc @ st30 Raised at file "lib/task_server.ml", line 184, characters 8-9
12/50 xenopsd-xc @ st30 Called from file "lib/storage.ml", line 57, characters 1-156
13/50 xenopsd-xc @ st30 Called from file "xc/xenops_server_xen.ml", line 254, characters 15-63
14/50 xenopsd-xc @ st30 Called from file "xc/xenops_server_xen.ml", line 1643, characters 15-76
15/50 xenopsd-xc @ st30 Called from file "lib/xenctrl.ml", line 127, characters 13-17
16/50 xenopsd-xc @ st30 Re-raised at file "lib/xenctrl.ml", line 127, characters 56-59
17/50 xenopsd-xc @ st30 Called from file "lib/xenops_server.ml", line 937, characters 3-54
18/50 xenopsd-xc @ st30 Called from file "lib/xenops_server.ml", line 1103, characters 4-71
19/50 xenopsd-xc @ st30 Called from file "list.ml", line 84, characters 24-34
20/50 xenopsd-xc @ st30 Called from file "lib/xenops_server.ml", line 1098, characters 2-367
21/50 xenopsd-xc @ st30 Called from file "lib/xenops_server.ml", line 1203, characters 3-46
22/50 xenopsd-xc @ st30 Called from file "lib/xenops_server.ml", line 1441, characters 3-9
23/50 xenopsd-xc @ st30 Raised at file "lib/xenops_server.ml", line 1452, characters 9-10
24/50 xenopsd-xc @ st30 Called from file "lib/xenops_server.ml", line 1458, characters 48-60
25/50 xenopsd-xc @ st30 Called from file "lib/task_server.ml", line 151, characters 15-26
26/50 xapi @ st30 Raised at file "xapi_xenops.ml", line 1719, characters 11-14
27/50 xapi @ st30 Called from file "lib/pervasiveext.ml", line 22, characters 3-9
28/50 xapi @ st30 Raised at file "xapi_xenops.ml", line 2005, characters 13-14
29/50 xapi @ st30 Called from file "lib/pervasiveext.ml", line 22, characters 3-9
30/50 xapi @ st30 Raised at file "xapi_xenops.ml", line 1785, characters 15-16
31/50 xapi @ st30 Called from file "message_forwarding.ml", line 233, characters 25-44
32/50 xapi @ st30 Called from file "message_forwarding.ml", line 915, characters 15-67
33/50 xapi @ st30 Called from file "lib/pervasiveext.ml", line 22, characters 3-9
34/50 xapi @ st30 Raised at file "lib/pervasiveext.ml", line 26, characters 9-12
35/50 xapi @ st30 Called from file "message_forwarding.ml", line 1205, characters 21-199
36/50 xapi @ st30 Called from file "lib/pervasiveext.ml", line 22, characters 3-9
37/50 xapi @ st30 Raised at file "lib/pervasiveext.ml", line 26, characters 9-12
38/50 xapi @ st30 Called from file "lib/pervasiveext.ml", line 22, characters 3-9
39/50 xapi @ st30 Raised at file "rbac.ml", line 236, characters 10-15
40/50 xapi @ st30 Called from file "server_helpers.ml", line 75, characters 11-41
41/50 xapi @ st30 Raised at file "cli_util.ml", line 78, characters 9-12
42/50 xapi @ st30 Called from file "lib/pervasiveext.ml", line 22, characters 3-9
43/50 xapi @ st30 Raised at file "lib/pervasiveext.ml", line 26, characters 9-12
44/50 xapi @ st30 Called from file "cli_operations.ml", line 1889, characters 2-6
45/50 xapi @ st30 Re-raised at file "cli_operations.ml", line 1898, characters 10-11
46/50 xapi @ st30 Called from file "cli_operations.ml", line 1821, characters 14-18
47/50 xapi @ st30 Called from file "cli_operations.ml", line 2109, characters 7-526
48/50 xapi @ st30 Called from file "xapi_cli.ml", line 113, characters 18-56
49/50 xapi @ st30 Called from file "lib/pervasiveext.ml", line 22, characters 3-9
```

One can automatically set “--trace” for a whole shell session as follows:

```
export XE_EXTRA_ARGS="--trace"
```

The XenAPI

We already store error information in the XenAPI “Task” object and so we can store backtraces at the same time. We shall add a field “backtrace” which will have type “string” but which will contain s-expression encoded backtrace data. Clients should not attempt to parse this string: its contents may change in future. The reason it is different from the json mentioned before is that it also contains host and process information supplied by Xapi, and may be extended in future to contain other diagnostic information.

The Xenopsd API

We already store error information in the xenopsd API “Task” objects, so we can extend these to store the backtrace in an additional field (“backtrace”). This field will have type “string” but will contain s-expression encoded backtrace data.

The SMAPIv1 API

Errors in SMAPIv1 are returned as XMLRPC “Faults” containing a code and a status line. Xapi transforms these into XenAPI exceptions usually of the form SR_BACKEND_FAILURE_<code>. We can extend the SM backends to use the XenAPI exception type directly: i.e. to marshal exceptions as dictionaries:

```python
results = {
    "Status": "Failure",
    "ErrorDescription": [ code, param1, ..., paramN ]
}
```

We can then define a new backtrace-carrying error:

- code = SR_BACKEND_FAILURE_WITH_BACKTRACE
- param1 = json-encoded backtrace
- param2 = code
- param3 = reason

which is internally transformed into SR_BACKEND_FAILURE_<code> and the backtrace is appended to the current Task backtrace. From the client’s point of view the final exception should look the same, but Xapi will have a chance to see and log the whole backtrace.
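Both ends of the new error can be sketched in Python. The SM side builds the fault dictionary with the parameter order listed above and reuses the error/files/lines JSON format from the marshalling section. The Xapi side, which in reality is OCaml, is modelled by unwrap_backtrace_failure: it rebuilds the SR_BACKEND_FAILURE_<code> name and appends the SM frames to a list standing in for the Task backtrace. Both function names are hypothetical, and the sketch returns only the error name and reason rather than the full XenAPI parameter list.

```python
import json
from typing import List, Tuple


def backtrace_failure(code: str, reason: str, error: str, files: List[str], lines: List[int]) -> dict:
    """SM side: build the SR_BACKEND_FAILURE_WITH_BACKTRACE fault dictionary."""
    backtrace = json.dumps({"error": error, "files": files, "lines": lines})
    return {
        "Status": "Failure",
        "ErrorDescription": ["SR_BACKEND_FAILURE_WITH_BACKTRACE", backtrace, code, reason],
    }


def unwrap_backtrace_failure(result: dict, task_backtrace: List[Tuple[str, int]]) -> Tuple[str, str]:
    """Xapi side (modelled): recover SR_BACKEND_FAILURE_<code> and keep the frames."""
    description = result["ErrorDescription"]
    if description[0] != "SR_BACKEND_FAILURE_WITH_BACKTRACE":
        raise ValueError("not a backtrace-carrying error: " + description[0])
    _, backtrace, code, reason = description
    decoded = json.loads(backtrace)
    # Pair the parallel lists back up and append them to the Task backtrace.
    task_backtrace.extend(zip(decoded["files"], decoded["lines"]))
    return "SR_BACKEND_FAILURE_" + code, reason
```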

As a side-effect, it is possible for SM plugins to throw XenAPI errors directly, without interpretation by Xapi.

| Design document | |
|---|---|
| Revision | v2 |
| Status | confirmed |

Better VM revert

Overview

XenAPI allows users to roll back the state of a VM to a previous state, which is stored in a snapshot, using the call VM.revert. Because there is no VDI.revert call, VM.revert uses VDI.clone on the snapshot to duplicate the contents of that disk and then uses the new clone as the storage for the VM.

Because VDI.clone creates new VDI refs and uuids, some problematic behaviours arise:

- Clients such as Apache CloudStack need to include complex logic to keep track of the disks they are actively managing
- Because the snapshot is cloned and the original VDI is deleted, references to the VDI, such as VDI.snapshot_of, become invalid. This means that the database has to be combed through to change these references. Because the database doesn’t support transactions, this operation is not atomic and can produce inconsistent database states.

Additionally, some filesystems support snapshots natively; for them, the clone procedure is much costlier than letting the filesystem do the revert.

We will fix these problems by:

- introducing the new feature VDI_REVERT in the SM interface (xapi_smint). This allows backends to advertise that they support the new functionality
- defining a new storage operation VDI.revert in storage_interface, which is gated by the feature VDI_REVERT
- proxying the storage operation to SMAPIv3 and SMAPIv1 backends accordingly
- adding VDI.revert to xapi_vdi, which will call the storage operation if the backend advertises it, and fall back to the previous method that uses VDI.clone if it doesn’t advertise it, or if issues are detected at runtime that prevent it
- changing the Xapi implementation of VM.revert to use VDI.revert
- implementing vdi_revert for common storage types, including File and LVM-based SRs
- adding unit and quick tests to xapi to test that VM.revert does not regress
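The fallback described in the list above can be sketched in Python. revert_vdi and RevertNotPossible are hypothetical names, and the two callables stand in for the storage-level VDI.revert and the existing VDI.clone-based path.

```python
from typing import Callable, Set


class RevertNotPossible(Exception):
    """Raised by the in-place path when a runtime issue prevents the revert."""


def revert_vdi(
    snapshot: str,
    sm_features: Set[str],
    in_place_revert: Callable[[str], str],
    clone_revert: Callable[[str], str],
) -> str:
    """Prefer the storage-level VDI.revert; otherwise use the VDI.clone path."""
    if "VDI_REVERT" in sm_features:
        try:
            return in_place_revert(snapshot)  # keeps the VDI's ref and uuid
        except RevertNotPossible:
            pass  # fall through to the previous behaviour
    return clone_revert(snapshot)  # creates a new VDI ref and uuid
```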

Current VM.revert behaviour

The code that reverts the state of storage is located in update_vifs_vbds_vgpus_and_vusbs. The steps it does is:

- destroys the VM’s VBDs (both disks and CDs)

- destroys the VM’s VDI (disks only), referenced by the snapshot’s VDIs using

snapshot_of; as well as the suspend VDI. - clones the snapshot’s VDIs (disks and CDs), if one clone fails none remain.

- searches the database for all snapshot_of references to the deleted VDIs and replaces them with the references of the newly cloned snapshots

- clones the snapshot’s resume VDI

- creates copies of all the cloned VBDs and associates them with the cloned VDIs

- assigns the new resume VDI to the VM
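The snapshot_of fix-up step is the one that the lack of database transactions makes fragile. The OCaml below is a minimal sketch on a hypothetical in-memory list of records (the `vdi_record` type and `fix_snapshot_of` function are illustrative, not xapi’s real database layer): each record is rewritten independently, so an interruption part-way through leaves some snapshots pointing at destroyed VDIs and others at the new clones.

```ocaml
(* Hypothetical in-memory stand-in for the VDI table; not xapi's DB API. *)
type vdi_record = { uuid : string; mutable snapshot_of : string option }

(* Re-point every snapshot_of that referenced a destroyed VDI at its clone.
   [replaced] maps old VDI references to the references of their clones.
   Records are updated one at a time with no transaction, so a failure
   part-way through leaves a mix of old and new references. *)
let fix_snapshot_of ~replaced (db : vdi_record list) =
  List.iter
    (fun r ->
      match r.snapshot_of with
      | Some old_ref -> (
          match List.assoc_opt old_ref replaced with
          | Some new_ref -> r.snapshot_of <- Some new_ref
          | None -> ())
      | None -> ())
    db
```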

XenAPI design

API

The function VDI.revert will be added, with arguments:

- in: snapshot: Ref(VDI): the snapshot to which we want to revert

- in: driver_params: Map(String,String): optional extra parameters

- out: Ref(VDI): reference to the VDI with the reverted contents

The function will look up the reference of the VDI whose contents need to be replaced, which is the snapshot’s snapshot_of field. It will then call the storage function VDI.revert to have that VDI’s contents replaced with the snapshot’s. The VDI object will not be modified, and the reference returned is the VDI’s original reference.

If anything prevents an in-place revert from completing successfully, for example the SM backend not advertising the feature VDI_REVERT, not implementing it, or the snapshot_of reference being invalid, an exception will be raised.
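The behaviour just described can be sketched in OCaml. Everything here is a hypothetical simplification: the `vdi` record, the two exceptions and the `lookup`/`storage_revert` parameters stand in for xapi’s database access, its real error codes and the storage-interface call.

```ocaml
(* Hypothetical, simplified model of the XenAPI-level VDI.revert. *)
exception Vdi_revert_not_supported of string (* SR lacks VDI_REVERT *)
exception Invalid_snapshot_of of string (* missing or dangling reference *)

type vdi = { uuid : string; snapshot_of : string option }

(* [lookup] resolves a VDI reference; [storage_revert snapshot target] stands
   in for the storage-level VDI.revert and may itself raise if the backend
   does not implement it. Returns the target's original reference. *)
let vdi_revert ~lookup ~sr_features ~storage_revert ~(snapshot : vdi) =
  if not (List.mem "VDI_REVERT" sr_features) then
    raise (Vdi_revert_not_supported snapshot.uuid);
  match snapshot.snapshot_of with
  | None -> raise (Invalid_snapshot_of snapshot.uuid)
  | Some target_ref -> (
      match lookup target_ref with
      | None -> raise (Invalid_snapshot_of target_ref)
      | Some (target : vdi) ->
          storage_revert snapshot target;
          (* The VDI record is not modified, so its reference stays valid. *)
          target_ref)
```

Returning the unchanged reference is what removes the need to rewrite snapshot_of fields elsewhere in the database.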

Xapi Storage

The function VDI.revert is added, with the following arguments:

- in: dbg: the task identifier, useful for tracing

- in: sr: SR where the new VDI must be created

- in: snapshot_info: metadata of the snapshot, the contents of which must be made available in the VDI indicated by the snapshot_of field

SMAPIv1

The function vdi_revert is defined with the following arguments:

- in: sr_uuid: the UUID of the SR containing both the VDI and the snapshot

- in: vdi_uuid: the UUID of the snapshot whose contents must be duplicated

- in: target_uuid: the UUID of the target whose contents must be replaced

The function will replace the contents of the target_uuid VDI with the contents of the vdi_uuid VDI without changing the identity of the target (i.e. name-label, uuid and location are guaranteed to remain the same). The vdi_uuid VDI is preserved by this operation. The operation is idempotent: repeating it leaves the target with the same contents.
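To make the contract concrete, here is a toy OCaml model of the SMAPIv1 semantics. The `vdi` record, the `Sr` map (standing in for the SR identified by sr_uuid) and this `vdi_revert` are illustrative assumptions, not SM’s Python code: only the target’s contents change, the snapshot is untouched, and applying the operation twice yields the same SR as applying it once.

```ocaml
(* A toy SR: VDI uuid -> record. Only [contents] may change on revert. *)
type vdi = { uuid : string; name_label : string; location : string; contents : string }

module Sr = Map.Make (String)

(* Replace the contents of [target_uuid] with those of [vdi_uuid], keeping
   the target's identity (uuid, name_label, location). Raises Not_found if
   either VDI is missing from the SR. *)
let vdi_revert (sr : vdi Sr.t) ~vdi_uuid ~target_uuid : vdi Sr.t =
  let snapshot = Sr.find vdi_uuid sr in
  let target = Sr.find target_uuid sr in
  Sr.add target_uuid { target with contents = snapshot.contents } sr
```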

SMAPIv3

In an analogous way to SMAPIv1, the function Volume.revert is defined with the

following arguments:

- in: dbg: the task identifier, useful for tracing

- in: sr: the UUID of the SR containing both the VDI and the snapshot

- in: snapshot: the UUID of the snapshot whose contents must be duplicated

- in: vdi: the UUID of the VDI whose contents must be replaced

Xapi

- add the capability VDI_REVERT so backends can advertise it

- use VDI.revert in VM.revert after the VDIs have been destroyed, and before the snapshot’s VDIs have been cloned. If any of the reverts fail because a Not_implemented exception is thrown, or the snapshot_of field contains an invalid reference, add the affected VDIs to the list to be cloned and recovered using the existing method

- expose a new xe vdi-revert CLI command
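The fallback rule can be sketched as a partition of the snapshot’s VDIs into those reverted in place and those left for the existing clone path. This is a minimal OCaml sketch under assumptions: `has_valid_snapshot_of` and `revert` are hypothetical stand-ins for the database check and the storage call, and the local `Not_implemented` exception represents whatever error the storage layer actually raises.

```ocaml
(* Hypothetical stand-in for the storage layer's "not implemented" error. *)
exception Not_implemented

(* Returns (reverted_in_place, to_clone). A snapshot goes to the clone path
   if its snapshot_of is invalid or the backend raises Not_implemented;
   [revert] is not called at all for invalid snapshot_of references. *)
let revert_or_fallback ~has_valid_snapshot_of ~revert snapshots =
  let reverted_in_place snap =
    has_valid_snapshot_of snap
    && (match revert snap with
        | () -> true
        | exception Not_implemented -> false)
  in
  List.partition reverted_in_place snapshots
```

Any other exception propagates, so only the two failure modes named in the design trigger the fallback.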

SM changes

We will modify:

- SRCommand.py and VDI.py to add a new vdi_revert function which throws a ‘not implemented’ exception

- FileSR.py to implement VDI.revert using a variant of the existing snapshot/clone machinery

- EXTSR.py and NFSSR.py to advertise the VDI_REVERT capability

- LVHDSR.py to implement VDI.revert using a variant of the existing snapshot/clone machinery

- LVHDoISCSISR.py and LVHDoHBASR.py to advertise the VDI_REVERT capability

Prototype code from the previous proposal

Prototype code exists here:

- xapi-project/xcp-idl#37 by @johnelse

- xapi-project/xen-api#2058 mainly by @johnelse but with 2 extra patches from me

- Definition of SMAPIv1 vdi_revert

- Hacky implementation for EXT/NFS

| Design document | |

|---|---|

| Revision | v1 |

| Status | released (6.0) |

Bonding Improvements design

This document describes design details for the PR-1006 requirements.

XAPI and XenAPI

Creating a Bond

Current Behaviour on Bond creation

Steps for a user to create a bond:

- Shutdown all VMs with VIFs using the interfaces that will be bonded, in order to unplug those VIFs.

- Create a Network to be used by the bond: Network.create

- Call Bond.create with a ref to this Network, a list of refs of slave PIFs, and a MAC address to use.

- Call PIF.reconfigure_ip to configure the bond master.

- Call Host.management_reconfigure if one of the slaves is the management interface. This command will call interface-reconfigure to bring up the master and bring down the slave PIFs, thereby activating the bond. Otherwise, call PIF.plug to activate the bond.

Bond.create XenAPI call:

Remove duplicates in the list of slaves.

Validate the following:

- Slaves must not be in a bond already.

- Slaves must not be VLAN masters.

- Slaves must be on the same host.

- Network does not already have a PIF on the same host as the slaves.

- The given MAC is valid.

Create the master PIF object.

- The device name of this PIF is bondx, with x the smallest unused non-negative integer.

- The MAC of the first-named slave is used if no MAC was specified.

Create the Bond object, specifying a reference to the master. The value of the PIF.master_of field on the master is dynamically computed on request.

Set the PIF.bond_slave_of fields of the slaves. The value of the Bond.slaves field is dynamically computed on request.
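The device-naming and MAC-defaulting rules can be sketched in OCaml as follows. The functions `next_bond_device` and `master_mac` are hypothetical names for illustration; `master_mac` takes the slaves’ MACs in the order that decides the default (the first-named slave here, the primary slave in the new behaviour).

```ocaml
(* Pick "bondN" where N is the smallest non-negative integer not already
   used by a device on the host. *)
let next_bond_device existing_devices =
  let rec find n =
    let name = "bond" ^ string_of_int n in
    if List.mem name existing_devices then find (n + 1) else name
  in
  find 0

(* Use the requested MAC if one was given, otherwise the first slave's MAC. *)
let master_mac ~requested ~slave_macs =
  match (requested, slave_macs) with
  | Some mac, _ -> mac
  | None, first :: _ -> first
  | None, [] -> invalid_arg "master_mac: no slaves"
```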

New Behaviour on Bond creation

Steps for a user to create a bond:

- Create a Network to be used by the bond: Network.create

- Call Bond.create with a ref to this Network, a list of refs of slave PIFs, and a MAC address to use.

The new bond will automatically be plugged if one of the slaves was plugged.

In the following, for a host h, a VIF-to-move is a VIF associated with a VM that is either

- running, suspended or paused on h, OR

- halted, and h is the only host that the VM can be started on.
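The VIF-to-move definition reads naturally as a predicate. In this OCaml sketch the `vm` record and its `on_host` and `startable_on` fields are hypothetical simplifications of what xapi would compute from its database.

```ocaml
type power_state = Running | Suspended | Paused | Halted

(* Hypothetical view of a VM for this check. *)
type vm = {
  power_state : power_state;
  on_host : string option; (* host it is running, suspended or paused on *)
  startable_on : string list; (* hosts the VM could be started on *)
}

(* A VIF of [vm] is a VIF-to-move for [host] if the VM is active on [host],
   or if it is halted and [host] is the only host it can start on. *)
let is_vif_to_move ~host vm =
  match vm.power_state with
  | Running | Suspended | Paused -> vm.on_host = Some host
  | Halted -> vm.startable_on = [ host ]
```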

The Bond.create XenAPI call is updated to do the following:

Remove duplicates in the list of slaves.

Validate the following, and raise an exception if any of these checks fails:

- Slaves must not be in a bond already.

- Slaves must not be VLAN masters.

- Slaves must not be Tunnel access PIFs.

- Slaves must be on the same host.

- Network does not already have a PIF on the same host as the slaves.

- The given MAC is valid.

Try unplugging all currently attached VIFs of the set of VIFs that need to be moved. Roll back and raise an exception if one of the VIFs cannot be unplugged (e.g. due to the absence of PV drivers in the VM).

Determine the primary slave: the management PIF (if among the slaves), or the first slave with IP configuration.
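Choosing the primary slave is a two-step search. The `pif` record below is a hypothetical simplification. The design does not say what happens if no slave is the management PIF or has an IP configuration, so this sketch returns None in that case rather than guessing.

```ocaml
(* Hypothetical, reduced view of a slave PIF. *)
type pif = { device : string; is_management : bool; has_ip_config : bool }

(* The management PIF if it is among the slaves, otherwise the first slave
   (in list order) that has an IP configuration. *)
let primary_slave slaves =
  match List.find_opt (fun p -> p.is_management) slaves with
  | Some p -> Some p
  | None -> List.find_opt (fun p -> p.has_ip_config) slaves
```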

Create the master PIF object.

- The device name of this PIF is bondx, with x the smallest unused non-negative integer.

- The MAC of the primary slave is used if no MAC was specified.

- Include the IP configuration of the primary slave.

- If any of the slaves has PIF.disallow_unplug = true, this will be copied to the master.

Create the Bond object, specifying a reference to the master. The value of the PIF.master_of field on the master is dynamically computed on request. Also, a reference to the primary slave is written to Bond.primary_slave on the new Bond object.

Set the PIF.bond_slave_of fields of the slaves. The value of the Bond.slaves field is dynamically computed on request.

Move VLANs, plus the VIFs-to-move on them, to the master.

- If all VLANs on the slaves have different tags, all VLANs will be moved to the bond master, while the same Network is used. The network effectively moves up to the bond and therefore no VIFs need to be moved.

- If multiple VLANs on different slaves have the same tag, they necessarily have different Networks as well. Only one VLAN with this tag is created on the bond master. All VIFs-to-move on the remaining VLAN networks are moved to the Network that was moved up.
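The VLAN rule amounts to grouping the slaves’ VLANs by tag. This OCaml sketch (the `vlan` record and `plan_vlan_moves` are illustrative) keeps one network per tag for the bond master and lists the other networks whose VIFs-to-move must be re-homed onto it. The design does not specify which of several same-tag VLANs is kept; the sketch assumes the first one encountered.

```ocaml
type vlan = { tag : int; network : string }

(* For each distinct tag: (tag, network moved up to the bond master,
   networks whose VIFs-to-move are moved onto that network). *)
let plan_vlan_moves vlans =
  let tags = List.sort_uniq compare (List.map (fun v -> v.tag) vlans) in
  List.map
    (fun tag ->
      match List.filter (fun v -> v.tag = tag) vlans with
      | kept :: others -> (tag, kept.network, List.map (fun v -> v.network) others)
      | [] -> assert false (* every tag comes from at least one VLAN *))
    tags
```

When all tags differ, every entry has an empty list of networks to drain, matching the case where networks simply move up with their VLANs.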

Move Tunnels to the master. The tunnel Networks move up with the tunnels. As tunnel keys are different for all tunnel networks, there are no complications as in the VLAN case.

Move VIFs-to-move on the slaves to the master.

If one of the slaves is the current management interface, move management to the master; the master will automatically be plugged. If none of the slaves is the management interface, plug the master if any of the slaves was plugged. In both cases, the slaves will automatically be unplugged.

On all slaves, reset the IP configuration and set disallow_unplug to false.

Note: “moving” a VIF, VLAN or tunnel means “re-creating somewhere else, and destroying the old one”.

Destroying a Bond

Current Behaviour on Bond destruction

Steps for a user to destroy a bond:

- If the management interface is on the bond, move it to another PIF using PIF.reconfigure_ip and Host.management_reconfigure. Otherwise, no PIF.unplug needs to be called on the bond master, as Bond.destroy does this automatically.

- Call Bond.destroy with a ref to the Bond object.

- If desired, bring up the former slave PIFs by calls to PIF.plug (this does not happen automatically).

Bond.destroy XenAPI call:

Validate the following constraints:

- No VLANs are attached to the bond master.

- The bond master is not the management PIF.

Bring down the master PIF and clean up the underlying network devices.

Remove the Bond and master PIF objects.

New Behaviour on Bond destruction

Steps for a user to destroy a bond:

- Call Bond.destroy with a ref to the Bond object.

- If desired, move VIFs/VLANs/tunnels/management from the (former) primary slave to other PIFs.

Bond.destroy XenAPI call is updated to do the following:

- Try unplugging all currently attached VIFs of the set of VIFs that need to be moved. Roll back and raise an exception if one of the VIFs cannot be unplugged (e.g. due to the absence of PV drivers in the VM).

- Copy the IP configuration of the master to the primary slave.

- Move VLANs, with their Networks, to the primary slave.

- Move Tunnels, with their Networks, to the primary slave.

- Move VIFs-to-move on the master to the primary slave.

- If the master is the current management interface, move management to the primary slave. The primary slave will automatically be plugged.

- If the master was plugged, plug the primary slave. This will automatically clean up the underlying devices of the bond.

- If the master has PIF.disallow_unplug = true, this will be copied to the primary slave.

- Remove the Bond and master PIF objects.

Using Bond Slaves

Current Behaviour for Bond Slaves

- It is possible to plug any existing PIF, even bond slaves. Any other PIFs that cannot be attached at the same time as the PIF that is being plugged are automatically unplugged.

- Similarly, it is possible to make a bond slave the management interface. Any other PIFs that cannot be attached at the same time as the new management PIF are automatically unplugged.

- It is possible to have a VIF on a Network associated with a bond slave. When the VIF’s VM is started, or the VIF is hot-plugged, the PIF it relies on is automatically plugged, and any other PIFs that cannot be attached at the same time as this PIF are automatically unplugged.

- It is possible to have a VLAN on a bond slave, though the bond (master) and the VLAN may not be simultaneously attached. This is not currently enforced (which may be considered a bug).

New behaviour for Bond Slaves

- It is no longer possible to plug a bond slave. The exception CANNOT_PLUG_BOND_SLAVE is raised when trying to do so.

- It is no longer possible to make a bond slave the management interface. The exception CANNOT_PLUG_BOND_SLAVE is raised when trying to do so.

- It is still possible to have a VIF on the Network of a bond slave. However, it is not possible to start such a VIF’s VM on a host, if this would need a bond slave to be plugged. Trying this will result in a CANNOT_PLUG_BOND_SLAVE exception. Likewise, it is not possible to hot-plug such a VIF.

- It is no longer possible to place a VLAN on a bond slave. The exception CANNOT_ADD_VLAN_TO_BOND_SLAVE is raised when trying to do so.

- It is no longer possible to place a tunnel on a bond slave. The exception CANNOT_ADD_TUNNEL_TO_BOND_SLAVE is raised when trying to do so.

Actions on Start-up

Current Behaviour on Start-up

When a pool slave starts up, bonds and VLANs on the pool master are replicated on the slave:

- Create all VLANs that the master has, but the slave has not. VLANs are identified by their tag, the device name of the slave PIF, and the Networks of the master and slave PIFs.

- Create all bonds that the master has, but the slave has not. If the interfaces needed for the bond are not all available on the slave, a partial bond is created. If some of these interfaces are already bonded on the slave, this bond is destroyed first.

New Behaviour on Start-up

- The current VLAN/tunnel/bond recreation code is retained, as it uses the new Bond.create and Bond.destroy functions, and therefore does what it needs to do.

- Before VLAN/tunnel/bond recreation, any violations of the rules defined in R2 are rectified, by moving VIFs, VLANs, tunnels or management up to bonds.

CLI

The behaviour of the xe CLI commands bond-create, bond-destroy, pif-plug, and host-management-reconfigure is changed to match their associated XenAPI calls.

XenCenter

XenCenter already automatically moves the management interface when a bond is created or destroyed. This is no longer necessary, as the Bond.create/destroy calls already do this. XenCenter only needs to copy any PIF.other_config keys that it needs between the primary slave and the bond master.

Manual Tests

- Create a bond of two interfaces…

- without VIFs/VLANs/management on them;

- with management on one of them;

- with a VLAN on one of them;

- with two VLANs on two different interfaces, having the same VLAN tag;

- with a VIF associated with a halted VM on one of them;

- with a VIF associated with a running VM (with and without PV drivers) on one of them.

- Destroy a bond of two interfaces…

- without VIFs/VLANs/management on it;

- with management on it;

- with a VLAN on it;

- with a VIF associated with a halted VM on it;

- with a VIF associated with a running VM (with and without PV drivers) on it.

- In a pool of two hosts, having VIFs/VLANs/management on the interfaces of the pool slave, create a bond on the pool master, and restart XAPI on the slave.

- Restart XAPI on a host with a networking configuration that has become illegal due to these requirements.

| Design document | |

|---|---|

| Revision | v2 |

| Status | proposed |

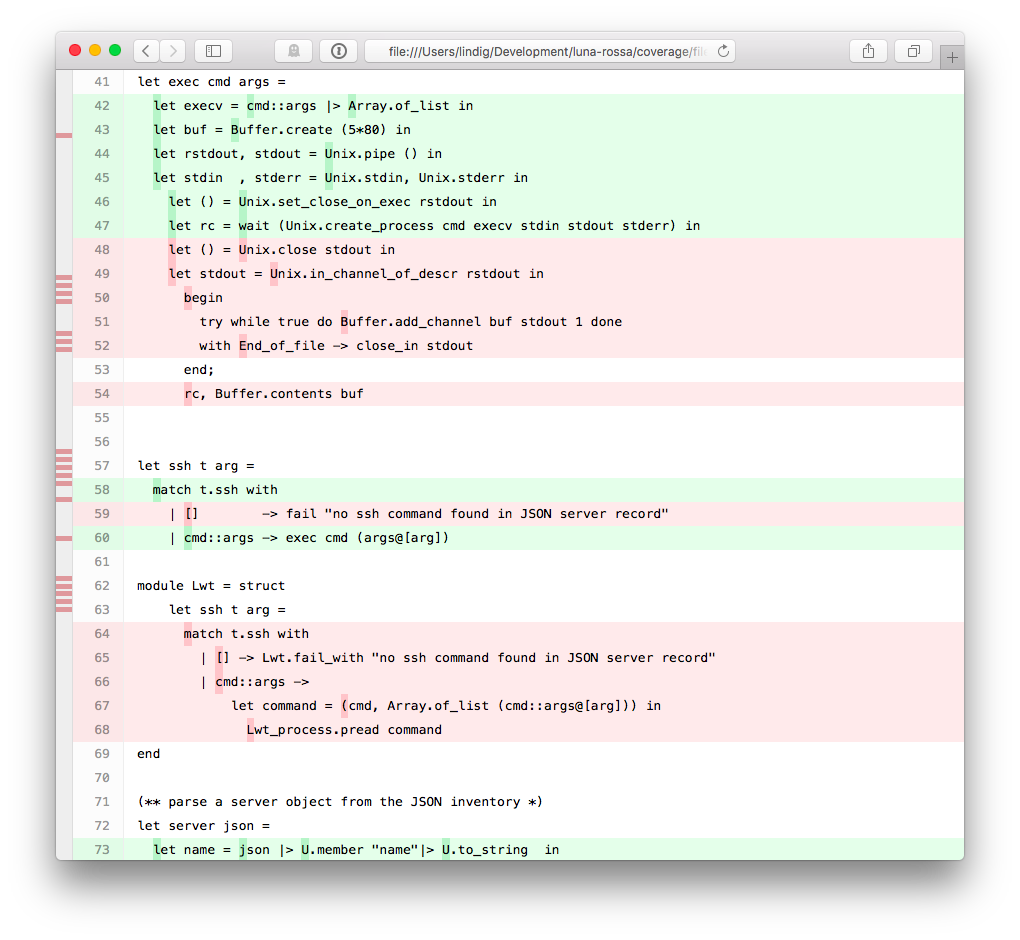

Code Coverage Profiling

We would like to add optional coverage profiling to existing OCaml projects in the context of XenServer and XenAPI. This article presents how we do it.

Binaries instrumented for coverage profiling in the XenServer project need to run in an environment where several services act together as they provide operating-system-level services. This makes it a little harder than profiling code that can be profiled and executed in isolation.

TL;DR

To build binaries with coverage profiling, do:

./configure --enable-coverage

make

Binaries will log coverage data to /tmp/bisect*.out, from which a coverage report can be generated in coverage/:

bisect-ppx-report -I _build -html coverage /tmp/bisect*.out

Profiling Framework Bisect-PPX

The open-source BisectPPX instrumentation framework uses extension points (PPX) in the OCaml compiler to instrument code during compilation. Instrumented code for a binary is then compiled as usual and, during execution, logs data to in-memory data structures. Before an instrumented binary terminates, it writes the logged data to a file. This data can then be analysed with the bisect-ppx-report tool to produce a summary of annotated code that highlights which parts of a codebase were executed.

BisectPPX has several desirable properties:

- a robust code base that is well tested

- it is easy to integrate into the compilation pipeline (see below)

- it is specific to the OCaml language; an expression-oriented language like OCaml doesn’t fit traditional statement coverage well

- it is actively maintained

- it generates useful reports for interactive and non-interactive use that help to improve code coverage

Red parts indicate code that wasn’t executed, whereas green parts indicate code that was. Hovering over a dark green spot reveals how often that point was executed.

The individual steps of instrumenting code with BisectPPX are greatly abstracted by OCamlfind (OCaml’s library manager) and OCamlbuild (OCaml’s compilation manager):

# write code

vim example.ml

# build it with instrumentation from bisect_ppx

ocamlbuild -use-ocamlfind -pkg bisect_ppx -pkg unix example.native

# execute it - generates files ./bisect*.out

./example.native

# generate report

bisect-ppx-report -I _build -html coverage bisect000*

# view coverage/index.html

Summary:

- 'binding' points: 2/2 (100.00%)

- 'sequence' points: 10/10 (100.00%)

- 'match/function' points: 5/8 (62.50%)

- total: 17/20 (85.00%)

The fourth step generates an HTML report in coverage/. All it takes is to declare to OCamlbuild that a module depends on bisect_ppx, and it will be instrumented during compilation. Behind the scenes, ocamlfind makes sure that the compiler uses a preprocessing step that instruments the code.

Signal Handling

During execution, the instrumented code collects data. The instrumentation registers a function with at_exit that writes the data to bisect*.out when exit is called. A binary can terminate without calling exit, and in that case the file would not be written. It is therefore important to make sure that exit is called. If this does not happen naturally, for example in the context of a daemon that is terminated by receiving the TERM signal, a signal handler must be installed:

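let stop signal =
  (* signal is OCaml's portable signal number (e.g. Sys.sigterm), not the OS one *)
  Printf.printf "caught signal %d\n%!" signal;
  (* exit runs the at_exit handlers, including the one that writes bisect*.out *)
  exit 0

let () = Sys.set_signal Sys.sigterm (Sys.Signal_handle stop)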

Dumping coverage information at runtime

By default coverage data can only be dumped at exit, which is inconvenient if you have a test-suite that needs to reuse a long running daemon, and starting/stopping it each time is not feasible.

In such cases we need an API to dump coverage at runtime, which is provided by bisect_ppx >= 1.3.0.

However, each daemon would need to set up a way to listen for an event that triggers this coverage dump. Furthermore, it is desirable to compile runtime coverage dumping in conditionally, to be absolutely sure that production builds do not use coverage-preprocessed code.

Hence, instead of duplicating all this build logic in each daemon (xapi, xenopsd, etc.), we provide this functionality in a common library, xapi-idl, that:

- logs a message on startup so we know it is active

- sets the BISECT_FILE environment variable to dump coverage in the appropriate place

- listens on the org.xen.xapi.coverage.<name> message queue for runtime coverage dump commands:

  - sending dump <Number> will cause runtime coverage to be dumped to a file named bisect-<name>-<random>.<Number>.out

  - sending reset will cause the runtime coverage counters to be reset
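The command protocol in this list is small enough to sketch. The OCaml below parses the two messages and builds the dump file name; the `command` type, the whitespace handling and the `random` token argument are assumptions for illustration, not the actual xapi-idl code.

```ocaml
type command = Dump of int | Reset

(* Parse a message received on org.xen.xapi.coverage.<name>;
   anything unrecognised yields None. *)
let parse_command msg =
  match String.split_on_char ' ' (String.trim msg) with
  | [ "reset" ] -> Some Reset
  | [ "dump"; n ] -> Option.map (fun n -> Dump n) (int_of_string_opt n)
  | _ -> None

(* File written for "dump <n>": bisect-<name>-<random>.<n>.out *)
let dump_file ~name ~random n = Printf.sprintf "bisect-%s-%s.%d.out" name random n
```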

Daemons that use Xcp_service.configure2 (e.g. xenopsd) will benefit from this runtime trigger automatically, provided they are themselves preprocessed with bisect_ppx.

Since we are interested in collecting coverage data for system-wide test-suite runs, we need a way to trigger dumping of coverage data centrally, and a good candidate for that is xapi as the top-level daemon. It will call Xcp_coverage.dispatcher_init (), which listens on org.xen.xapi.coverage.dispatch and dispatches the coverage dump command to all message queues under org.xen.xapi.coverage.* except itself.
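The dispatcher’s fan-out can be sketched as a filter over queue names. This assumes that "itself" means the dispatcher’s own org.xen.xapi.coverage.dispatch queue, and that the list of queue names and the `send` function are supplied by the message-switch client; both are hypothetical stand-ins, not the real API.

```ocaml
let prefix = "org.xen.xapi.coverage."
let dispatch_queue = prefix ^ "dispatch"

(* Every queue under the coverage prefix except the dispatcher's own. *)
let forward_targets queues =
  let plen = String.length prefix in
  List.filter
    (fun q ->
      String.length q > plen && String.sub q 0 plen = prefix && q <> dispatch_queue)
    queues

(* [send] stands in for the message-switch client call. *)
let dispatch ~send ~queues cmd =
  List.iter (fun q -> send q cmd) (forward_targets queues)
```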

On production and regular builds, all of this is a no-op. This is ensured by having separate lib/coverage/disabled.ml and lib/coverage/enabled.ml files which implement the same interface, and choosing which one to use at build time.
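The build-time switch relies on both files exposing one interface. Here is a reduced OCaml sketch of that pattern with a hypothetical two-function signature: the disabled variant does nothing, and the enabled one would do the real work (here it only logs). In the real build only one of the two files is compiled, so no runtime flag is involved; this single-file sketch imitates that choice with a module alias.

```ocaml
(* Hypothetical, reduced version of the shared interface. *)
module type COVERAGE = sig
  val init : string -> unit
  val dispatcher_init : unit -> unit
end

(* disabled.ml: production and regular builds; everything is a no-op. *)
module Disabled : COVERAGE = struct
  let init _name = ()
  let dispatcher_init () = ()
end

(* enabled.ml: coverage builds only. This sketch just logs to stderr. *)
module Enabled : COVERAGE = struct
  let init name = Printf.eprintf "coverage: enabled for %s\n%!" name
  let dispatcher_init () = Printf.eprintf "coverage: dispatcher started\n%!"
end

(* The build selects exactly one implementation. *)
module Coverage = Disabled
```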

Where Data is Written

By default, BisectPPX writes data in a binary’s current working directory as bisectXXXX.out. It doesn’t overwrite existing files, and files from several runs can be combined during analysis. However, this name and location can be inconvenient when multiple programs share a directory.

BisectPPX’s default can be overridden with the BISECT_FILE environment variable. This can happen on the command line:

BISECT_FILE=/tmp/example ./example.native

In the context of XenServer we could do this in startup scripts. However, we added a bit of code

val Coverage.init: string -> unit

that sets the environment variable from inside the program. The files are written to a temporary directory (respecting $TMP or using /tmp), and the string argument is included in their names. To be effective, this function must be called before the program exits. For clarity, it is called at the beginning of program execution.
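The path computation behind Coverage.init can be sketched with the standard library alone. The `bisect-<name>-` prefix is an assumption chosen to mirror the runtime dump names; actually exporting the variable needs the unix library, so that step is shown only as a comment.

```ocaml
(* Value Coverage.init would export as BISECT_FILE: $TMP if set and
   non-empty, otherwise /tmp, plus a name-specific prefix (assumed). *)
let bisect_file name =
  let tmp =
    match Sys.getenv_opt "TMP" with Some d when d <> "" -> d | _ -> "/tmp"
  in
  Filename.concat tmp ("bisect-" ^ name ^ "-")

(* With the unix library linked, init could then be:
   let init name = Unix.putenv "BISECT_FILE" (bisect_file name) *)
```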

Instrumenting an Oasis Project

While instrumentation is easy at the level of a small file or project, it is challenging in a bigger project. We decided to focus on projects that are built with the Oasis build and packaging manager. These have a well-defined structure and compilation process that is controlled by a central _oasis file. This file describes, for each library and binary, its dependencies at a package level. From this, Oasis generates a configure script and compilation rules for the OCamlbuild system. Oasis is designed so that the generated files can be shipped without requiring Oasis itself to be available.

Goals for instrumentation are:

- what files are instrumented should be obvious and easy to manage

- instrumentation must be optional, yet easy to activate

- avoid methods that require keeping several files in sync, like multiple _oasis files

- avoid separate Git branches for instrumented and non-instrumented code

In the ideal case, we could introduce a configuration switch ./configure --enable-coverage that would prepare compilation for coverage instrumentation. While Oasis supports the creation of such switches, they cannot be used to control build dependencies like compiling a file with or without package bisect_ppx. We have chosen a different method:

A Makefile target coverage augments the _tags file to include the rules in file _tags.coverage that cause files to be instrumented:

make coverage # prepare

make # build

leads to the execution of this code during preparation:

coverage: _tags _tags.coverage

test ! -f _tags.orig && mv _tags _tags.orig || true

cat _tags.coverage _tags.orig > _tags

The file _tags.coverage contains two simple OCamlbuild rules that could be tweaked to instrument only some files:

<**/*.ml{,i,y}>: pkg_bisect_ppx

<**/*.native>: pkg_bisect_ppx

When make coverage is not called, these rules are not active and hence code is not instrumented for coverage. We believe that this solution to control instrumentation meets the goals from above. In particular, what files are instrumented and when is controlled by very few lines of declarative code that live in the main repository of a project.

Project Layout

The crucial files in an Oasis-controlled project that is set up for coverage analysis are:

./_oasis - make "profiling" a build dependency

./_tags.coverage - what files get instrumented

./profiling/coverage.ml - support file, sets env var

./Makefile - target 'coverage'

The _oasis file bundles the files under profiling/ into an internal library which executables then depend on:

# Support files for profiling

Library profiling
  CompiledObject: best
  Path: profiling
  Install: false
  Findlibname: profiling
  Modules: Coverage
  BuildDepends:

Executable set_domain_uuid
  CompiledObject: best
  Path: tools
  ByteOpt: -warn-error +a-3
  NativeOpt: -warn-error +a-3
  MainIs: set_domain_uuid.ml
  Install: false
  BuildDepends:
    xenctrl,
    uuidm,
    cmdliner,
    profiling # <-- here

The Makefile target coverage primes the project for a profiling build:

# make coverage - prepares for building with coverage analysis

coverage: _tags _tags.coverage

test ! -f _tags.orig && mv _tags _tags.orig || true

cat _tags.coverage _tags.orig > _tags

| Design document | |

|---|---|

| Revision | v7 |

| Status | released (7.0) |

| Revision history | |

| v1 | Initial version |

| v2 | Add details about VM migration and import |

| v3 | Included and excluded use cases |

| v4 | Rolling Pool Upgrade use cases |

| v5 | Lots of changes to simplify the design |

| v6 | Use case refresh based on simplified design |

| v7 | RPU refresh based on simplified design |

CPU feature levelling 2.0

Executive Summary

The old XS 5.6-style Heterogeneous Pool feature that is based around hardware-level CPUID masking will be replaced by a safer and more flexible software-based levelling mechanism.

History

- Original XS 5.6 design: heterogeneous-pools

- Changes made in XS 5.6 FP1 for the DR feature (added CPUID checks upon migration)

- XS 6.1: migration checks extended for cross-pool scenario

High-level Interfaces and Behaviour

A VM can only be migrated safely from one host to another if both hosts offer the set of CPU features which the VM expects. If this is not the case, CPU features may appear or disappear as the VM is migrated, causing it to crash. The purpose of feature levelling is to hide features which the hosts do not have in common from the VM, so that it does not see any change in CPU capabilities when it is migrated.

Most pools start off with homogeneous hardware, but over time it may become impossible to source new hosts with the same specifications as the ones already in the pool. The main use of feature levelling is to allow such newer, more capable hosts to be added to an existing pool while preserving the ability to migrate existing VMs to any host in the pool.

Principles for Migration

The CPU levelling feature aims to both:

- Make VM migrations safe by ensuring that a VM will see the same CPU features before and after a migration.

- Make VMs as mobile as possible, so that they can be freely migrated around in a XenServer pool.

To make migrations safe:

- A migration request will be blocked if the destination host does not offer some of the CPU features that the VM currently sees.

- Any additional CPU features that the destination host is able to offer will be hidden from the VM.

Note: Due to the limitations of the old Heterogeneous Pools feature, we are not able to guarantee the safety of VMs that are migrated to a Levelling-v2 host from an older host, during a rolling pool upgrade. This is because such VMs may be using CPU features that were not captured in the old feature sets, of which we are therefore unaware. However, migrations between the same two hosts, but before the upgrade, may have already been unsafe. The promise is that we will not make migrations more unsafe during a rolling pool upgrade.

To make VMs mobile:

- A VM that is started in a XenServer pool will be able to see only CPU features that are common to all hosts in the pool. The set of common CPU features is referred to in this document as the pool CPU feature level, or simply the pool level.
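The pool level and the migration safety check are both bit-wise operations on feature sets. The OCaml sketch below assumes the word-list representation of host.cpu_info:features (32-bit words, leftmost first), with shorter sets padded with zero words on the right; the function names are illustrative.

```ocaml
(* Combine two word lists position by position, padding the shorter with 0. *)
let rec zip_words f a b =
  match (a, b) with
  | [], [] -> []
  | x :: a', [] -> f x 0 :: zip_words f a' []
  | [], y :: b' -> f 0 y :: zip_words f [] b'
  | x :: a', y :: b' -> f x y :: zip_words f a' b'

(* Features present in both sets. *)
let inter a b = zip_words ( land ) a b

(* The pool level: features common to every host in the pool. *)
let pool_level = function
  | [] -> []
  | first :: rest -> List.fold_left inter first rest

(* Safe only if the destination offers every feature the VM sees; extra
   destination features are simply not exposed to the VM. *)
let can_migrate ~vm ~dest = inter vm dest = zip_words (fun x _ -> x) vm dest
```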

Use Cases for Pools

A user wants to add a new host to an existing XenServer pool. The new host has all the features of the existing hosts, plus extra features which the existing hosts do not. The new host will be allowed to join the pool, but its extra features will be hidden from VMs that are started on the host or migrated to it. The join does not require any host reboots.

A user wants to add a new host to an existing XenServer pool. The new host does not have all the features of the existing ones. XenCenter warns the user that adding the host to the pool is possible, but it would lower the pool’s CPU feature level. The user accepts this and continues the join. The join does not require any host reboots. VMs that are started anywhere on the pool, from now on, will only see the features of the new host (the lowest common denominator), such that they are migratable to any host in the pool, including the new one. VMs that were running before the pool join will not be migratable to the new host, because these VMs may be using features that the new host does not have. However, after a reboot, such VMs will be fully mobile.

A user wants to add a new host to an existing XenServer pool. The new host does not have all the features of the existing ones, and at the same time, it has certain features that the pool does not have (the feature sets overlap). This is essentially a combination of the two use cases above, where the pool’s CPU feature level will be downgraded to the intersection of the feature sets of the pool and the new host. The join does not require any host reboots.

A user wants to upgrade or repair the hardware of a host in an existing XenServer pool. After upgrade the host has all the features it used to have, plus extra features which other hosts in the pool do not have. The extra features are masked out and the host resumes its place in the pool when it is booted up again.

A user wants to upgrade or repair the hardware of a host in an existing XenServer pool. After upgrade the host has fewer features than it used to have. When the host is booted up again, the pool’s CPU feature level will be automatically lowered, and the user will be alerted of this fact (through the usual alerting mechanism).

A user wants to remove a host from an existing XenServer pool. The host will be removed as normal after any VMs on it have been migrated away. The feature set offered by the pool will be automatically re-levelled upwards in case the host which was removed was the least capable in the pool, and additional features common to the remaining hosts will be unmasked.

Rolling Pool Upgrade

A VM which was running on the pool before the upgrade is expected to continue to run afterwards. However, when the VM is migrated to an upgraded host, some of the CPU features it had been using might disappear, either because they are not offered by the host or because the new feature-levelling mechanism hides them. To have the best chance for such a VM to successfully migrate (see the note under “Principles for Migration”), it will be given a temporary VM-level feature set providing all of the destination’s CPU features that were unknown to XenServer before the upgrade. When the VM is rebooted it will inherit the pool-level feature set.

A VM which is started during the upgrade will be given the current pool-level feature set. The pool-level feature set may drop after the VM is started, as more hosts are upgraded and re-join the pool, however the VM is guaranteed to be able to migrate to any host which has already been upgraded. If the VM is started on the master, there is a risk that it may only be able to run on that host.

To allow the VMs with grandfathered-in flags to be migrated around in the pool, the intra-pool VM migration pre-checks will compare the VM’s feature flags to the target host’s flags, not the pool flags. This will maximise the chance that a VM can be migrated somewhere in a heterogeneous pool, particularly in the case where only a few hosts in the pool do not have features which the VMs require.

To allow cross-pool migration, including to a pool of a higher XenServer version, we will still check the VM’s requirements against the pool-level features of the target pool. This is to avoid the possibility that we migrate a VM to an ‘island’ in the other pool, from which it cannot be migrated any further.
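The two pre-check rules differ only in which feature set the VM is compared against. In this OCaml sketch feature sets are plain lists of feature names for readability, and `precheck` is a hypothetical function name.

```ocaml
type migration = Intra_pool | Cross_pool

(* Every feature the VM sees must be present in the target set. *)
let subset vm target = List.for_all (fun f -> List.mem f target) vm

(* Intra-pool: compare with the target host (lets grandfathered VMs move).
   Cross-pool: compare with the target pool level (avoids 'islands'). *)
let precheck ~kind ~vm_features ~target_host_features ~target_pool_features =
  let target =
    match kind with
    | Intra_pool -> target_host_features
    | Cross_pool -> target_pool_features
  in
  subset vm_features target
```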

XenAPI Changes

Fields

- host.cpu_info is a field of type (string -> string) map that contains information about the CPUs in a host. It contains the following keys: cpu_count, socket_count, vendor, speed, modelname, family, model, stepping, flags, features, features_after_reboot, physical_features and maskable.
  - The following keys are specific to hardware-based CPU masking and will be removed: features_after_reboot, physical_features and maskable.
  - The features key will continue to hold the current CPU features that the host is able to use. In practice, these features will be available to Xen itself and dom0; guests may only see a subset. The current format is a string of four 32-bit words represented as four groups of 8 hexadecimal digits, separated by dashes. This will change to an arbitrary number of 32-bit words. Each bit at a particular position (starting from the left) still refers to a distinct CPU feature (1: feature is present; 0: feature is absent), and feature strings may be compared between hosts. The old format simply becomes a special (4-word) case of the new format, and bits in the same position may be compared between old and new feature strings.
  - The new key features_pv will be added, representing the subset of features that the host is able to offer to a PV guest.
  - The new key features_hvm will be added, representing the subset of features that the host is able to offer to an HVM guest.
- A new field pool.cpu_info of type (string -> string) map (read only) will be added. It will contain:
  - vendor: the common CPU vendor across all hosts in the pool.
  - features_pv: the intersection of features_pv across all hosts in the pool, representing the feature set that a PV guest will see when started on the pool.
  - features_hvm: the intersection of features_hvm across all hosts in the pool, representing the feature set that an HVM guest will see when started on the pool.
  - cpu_count: the total number of CPU cores in the pool.
  - socket_count: the total number of CPU sockets in the pool.
- The pool.other_config:cpuid_feature_mask override key will no longer have any effect on pool join or VM migration.
- The field VM.last_boot_CPU_flags will be updated to the new format (see host.cpu_info:features). It will still contain the feature set that the VM was started with as well as the vendor (under the features and vendor keys respectively).
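
To make the new features format concrete, here is a rough OCaml sketch that parses a dash-separated feature string into 32-bit words and computes a pool level as the bitwise intersection of the per-host strings, which is how pool.cpu_info:features_{pv,hvm} is defined above. The function names are hypothetical. Treating words missing from a shorter string as 0 (feature absent) is also an assumption, because the design does not say how strings of different lengths are intersected.

```ocaml
(* Hypothetical helpers for illustration; not xapi's actual code. *)

(* Parse "0000000f-000000ff" into [| 0xfL; 0xffL |]; each dash-separated
   group is one 32-bit word written as 8 hexadecimal digits. *)
let parse_features (s : string) : int64 array =
  if s = "" then [||]
  else
    String.split_on_char '-' s
    |> List.map (fun word -> Int64.of_string ("0x" ^ word))
    |> Array.of_list

(* Inverse of [parse_features]: zero-padded lowercase hex, dash-separated. *)
let string_of_features (f : int64 array) : string =
  Array.to_list f |> List.map (Printf.sprintf "%08Lx") |> String.concat "-"

(* Bitwise AND of two feature sets. Assumption: a word missing from the
   shorter set counts as 0, i.e. those features are absent. *)
let intersect (a : int64 array) (b : int64 array) : int64 array =
  let word f i = if i < Array.length f then f.(i) else 0L in
  Array.init (max (Array.length a) (Array.length b)) (fun i ->
      Int64.logand (word a i) (word b i))

(* Pool level: intersection of every host's features_pv (or features_hvm). *)
let pool_level (host_features : string list) : string =
  match List.map parse_features host_features with
  | [] -> ""
  | first :: rest -> string_of_features (List.fold_left intersect first rest)
```

For example, pool_level ["0000000f-000000ff"; "00000005-0000000f"] evaluates to "00000005-0000000f": a feature is in the pool level only if every host has it.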

Messages

- pool.join currently requires that the CPU vendor and feature set (according to host.cpu_info:vendor and host.cpu_info:features) of the joining host are equal to those of the pool master. This requirement will be loosened to mandate only equality in CPU vendor:
  - The join will be allowed if host.cpu_info:vendor equals pool.cpu_info:vendor.
  - This means that xapi will additionally allow hosts that have a more extensive feature set than the pool (as long as the CPU vendor is common). Such hosts are transparently down-levelled to the pool level (without needing reboots).
  - This further means that xapi will additionally allow hosts that have a less extensive feature set than the pool (as long as the CPU vendor is common). In this case, the pool is transparently down-levelled to the new host’s level (without needing reboots). Note that this does not affect running VMs in any way: their mobility is not restricted, and they can still migrate to any host they could migrate to before. It does mean, however, that those running VMs will not be migratable to the new host.
  - The current error raised in case of a CPU mismatch is POOL_HOSTS_NOT_HOMOGENEOUS with reason argument "CPUs differ". This will remain the error that is raised if the pool join fails due to incompatible CPU vendors.
  - The pool.other_config:cpuid_feature_mask override key will no longer have any effect.
- host.set_cpu_features and host.reset_cpu_features will be removed: it is no longer possible to use the old method of CPU feature masking (CPU feature sets are controlled automatically by xapi). Calls will fail with MESSAGE_REMOVED.
- VM lifecycle operations will be updated internally to use the new feature fields, to ensure that:
  - Newly started VMs will be given CPU features according to the pool level for maximal mobility.
  - For safety, running VMs will maintain their feature set across migrations and suspend/resume cycles. CPU features will transparently be hidden from VMs.
  - Furthermore, migrate and resume will only be allowed if the target host’s CPUs are capable enough, i.e. host.cpu_info:vendor = VM.last_boot_CPU_flags:vendor and host.cpu_info:features_{pv,hvm} ⊇ VM.last_boot_CPU_flags:features. A VM_INCOMPATIBLE_WITH_THIS_HOST error will be returned otherwise (as happens today).
  - For cross-pool migrations, to ensure maximal mobility in the target pool, a stricter condition will apply: the VM must satisfy the pool CPU level rather than just the target host’s level: pool.cpu_info:vendor = VM.last_boot_CPU_flags:vendor and pool.cpu_info:features_{pv,hvm} ⊇ VM.last_boot_CPU_flags:features.
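
The migrate/resume condition above (same vendor, and target features ⊇ VM features) boils down to a per-word bit test, while the new pool.join rule only compares vendors. A minimal OCaml sketch follows; the cpu record and all function names are hypothetical. For an in-pool check, the target would be the host’s cpu_info (features_pv or features_hvm); for a cross-pool check, the target pool’s cpu_info.

```ocaml
(* Hypothetical sketch of the CPU compatibility checks; not xapi's code. *)

type cpu = { vendor : string; features : string }

let parse_features (s : string) : int64 array =
  if s = "" then [||]
  else
    String.split_on_char '-' s
    |> List.map (fun word -> Int64.of_string ("0x" ^ word))
    |> Array.of_list

(* [have ⊇ want]: every bit set in [want] is also set in [have]. A word
   missing from [have] is treated as 0, so extra features requested by
   [want] make the check fail. *)
let is_superset ~(have : int64 array) ~(want : int64 array) : bool =
  let word f i = if i < Array.length f then f.(i) else 0L in
  let rec check i =
    i >= Array.length want
    || (Int64.logand want.(i) (word have i) = want.(i) && check (i + 1))
  in
  check 0

(* [target] is host.cpu_info (in-pool) or pool.cpu_info (cross-pool), with
   [features] taken from features_pv or features_hvm as appropriate. *)
let vm_can_run ~(target : cpu) ~(vm : cpu) : bool =
  target.vendor = vm.vendor
  && is_superset
       ~have:(parse_features target.features)
       ~want:(parse_features vm.features)

(* pool.join under the new rule: only the CPU vendor has to match. *)
let host_can_join ~(pool_vendor : string) ~(host_vendor : string) : bool =
  pool_vendor = host_vendor
```

Keeping the subset test separate from the vendor test mirrors the design, where pool.join drops the feature constraint but migration and resume keep it.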

CLI Changes

The following changes to the xe CLI will be made:

- xe host-cpu-info (as well as xe host-param-list and friends) will return the fields of host.cpu_info as described above.
- xe host-set-cpu-features and xe host-reset-cpu-features will be removed.
- xe host-get-cpu-features will still return the value of host.cpu_info:features for a given host.

Low-level implementation

Xenctrl

The old xc_get_boot_cpufeatures hypercall will be removed, and replaced by two new functions, which are available to xenopsd through the Xenctrl module:

external get_levelling_caps : handle -> int64 = "stub_xc_get_levelling_caps"

type featureset_index = Featureset_host | Featureset_pv | Featureset_hvm

external get_featureset : handle -> featureset_index -> int64 array = "stub_xc_get_featureset"

In particular, xapi/xenopsd will use the get_featureset function to ask Xen for the widest sets of CPU features that it can offer to a VM (PV or HVM). I don’t think there is a use for get_levelling_caps yet.
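
Since get_featureset returns an int64 array while host.cpu_info stores the dash-separated string described earlier, something has to convert between the two. A possible sketch follows, assuming that each array element carries one 32-bit feature word in its low 32 bits (the design does not spell this out). The function name is hypothetical.

```ocaml
(* Hypothetical conversion from the Xenctrl featureset array to the
   host.cpu_info:features string format. Assumes one 32-bit word per
   element, stored in the low 32 bits of the int64. *)
let string_of_featureset (fs : int64 array) : string =
  Array.to_list fs
  |> List.map (fun w -> Printf.sprintf "%08Lx" (Int64.logand w 0xFFFF_FFFFL))
  |> String.concat "-"

(* Intended use inside xenopsd, where xc is a Xenctrl handle:
     string_of_featureset (Xenctrl.get_featureset xc Xenctrl.Featureset_pv) *)
```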

Xenopsd

- Update the type Host.cpu_info, which contains all the fields that need to go into the host.cpu_info field in the xapi DB. The type already exists but is unused. Add the function HOST.get_cpu_info to obtain an instance of the type. Some code from xapi and the cpuid.ml from xen-api-libs can be reused.
- Add a platform key featureset (Vm.t.platformdata), which xenopsd will write to xenstore along with the other platform keys (no code change needed in xenopsd). Xenguest will pick this up when a domain is created, and will apply the CPUID policy to the domain. This has the effect of masking out features that the host may have, but which have a 0 in the feature set bitmap.
- Review current cpuid-related functions in xc/domain.ml.

Xapi

Xapi startup

- Update the Create_misc.create_host_cpu function to use the new xenopsd call.
- If the host features fall below pool level, e.g. due to a change in hardware: down-level the pool by updating pool.cpu_info.features_{pv,hvm}. Newly started VMs will inherit the new level; already running VMs will not be affected, but will not be able to migrate to this host.
- To notify the admin of this event, an API alert (message) will be set: pool_cpu_features_downgraded.
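
The startup down-levelling step can be sketched as a bitwise AND of the pool level with the host’s level, together with a report of whether any bit was lost so that pool_cpu_features_downgraded can be raised. This OCaml sketch is hypothetical. Keeping the pool string’s length and treating missing host words as 0 are assumptions.

```ocaml
(* Hypothetical sketch of the down-levelling done at xapi startup. *)

let parse_features (s : string) : int64 array =
  if s = "" then [||]
  else
    String.split_on_char '-' s
    |> List.map (fun word -> Int64.of_string ("0x" ^ word))
    |> Array.of_list

let string_of_features (f : int64 array) : string =
  Array.to_list f |> List.map (Printf.sprintf "%08Lx") |> String.concat "-"

(* Returns the new pool level and [true] if it dropped, in which case the
   pool_cpu_features_downgraded message would be raised. Assumption: the
   result keeps the pool string's length; host words beyond that length
   are ignored and missing host words count as 0. *)
let down_level ~(pool : string) ~(host : string) : string * bool =
  let p = parse_features pool and h = parse_features host in
  let word i = if i < Array.length h then h.(i) else 0L in
  let lowered = Array.mapi (fun i w -> Int64.logand w (word i)) p in
  (string_of_features lowered, lowered <> p)
```

A host with more features than the pool leaves the level unchanged. A host lacking even one pool-level bit lowers the level and triggers the alert.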

VM start

- Inherit the feature set from the pool (pool.cpu_info.features_{pv,hvm}) and set VM.last_boot_CPU_flags (cpuid_helpers.ml).
- The domain will be started with this CPU feature set enabled, by writing the feature set string to platformdata (see above).
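
Here is a small sketch of the VM start step. It chooses the pool-level string for the guest type, records that string with the vendor as VM.last_boot_CPU_flags, and adds it to the platform data under the featureset key. Representing these maps as (string * string) association lists, the guest type, and the function name are all assumptions made for illustration.

```ocaml
(* Hypothetical sketch of the logic the design places in cpuid_helpers.ml. *)

type guest = PV | HVM

(* [pool_cpu_info] stands in for the pool.cpu_info map. Returns the new
   VM.last_boot_CPU_flags and the updated platform data. *)
let cpu_flags_for_start ~(pool_cpu_info : (string * string) list)
    ~(platformdata : (string * string) list) (g : guest) =
  let key = match g with PV -> "features_pv" | HVM -> "features_hvm" in
  let features = List.assoc key pool_cpu_info in
  let vendor = List.assoc "vendor" pool_cpu_info in
  let last_boot_cpu_flags = [ ("vendor", vendor); ("features", features) ] in
  (* Xenguest reads the featureset key when the domain is created. *)
  let platformdata =
    ("featureset", features) :: List.remove_assoc "featureset" platformdata
  in
  (last_boot_cpu_flags, platformdata)
```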

VM migrate and resume

- There are already CPU compatibility checks on migration, both in-pool and cross-pool, as well as on resume. Xapi compares VM.last_boot_CPU_flags of the VM to be migrated with host.cpu_info of the receiving host. Migration is only allowed if the CPU vendors are the same and host.cpu_info:features ⊇ VM.last_boot_CPU_flags:features. The check can be overridden by setting the force argument to true.
- For in-pool migrations, these checks will be updated to use the appropriate features_pv or features_hvm field.
- For cross-pool migrations, these checks will be updated to use pool.cpu_info (features_pv or features_hvm depending on how the VM was booted) rather than host.cpu_info.
- If the above checks pass, then VM.last_boot_CPU_flags will be maintained, and the new domain will be started with the same CPU feature set enabled, by writing the feature set string to platformdata (see above).
- In case the VM is migrated to a host with a higher xapi software version (e.g. a migration from a host that does not have CPU levelling v2), the feature string may be longer. This may happen during a rolling pool upgrade or a cross-pool migration, or when a suspended VM is resumed after an upgrade. In this case, the following safety rules apply:
  - Only the existing (shorter) feature string will be used to determine whether the migration will be allowed. This is the best we can do, because we are unaware of the state of the extended feature set on the older host.
  - The existing feature set in VM.last_boot_CPU_flags will be extended with the extra bits in host.cpu_info:features_{pv,hvm}, i.e. the widest feature set that can possibly be granted to the VM (just in case the VM was using any of these features before the migration).
  - Strictly speaking, a migration of a VM from host A to B that was allowed before B was upgraded may no longer be allowed after the upgrade, due to stricter feature sets in the new implementation (from the xc_get_featureset hypercall). However, the CPU features that are switched off by the new implementation are features that a VM would not have been able to actually use. We therefore need a don’t-care feature set (similar to the old pool.other_config:cpuid_feature_mask key) with bits that we may ignore in migration checks, and switch off after the migration. This will be a xapi config file option.
  - XXX: Can we actually block a cross-pool migration at the receiver end??
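
The first two safety rules for migrating to a host with a longer feature string can be sketched as follows. Only the words present in the VM’s (shorter) string are checked. If the check passes, the VM’s string is extended with the host’s extra words. This OCaml sketch uses hypothetical names and does not model the don’t-care feature set.

```ocaml
(* Hypothetical sketch; not xapi's actual implementation. *)

let parse_features (s : string) : int64 array =
  if s = "" then [||]
  else
    String.split_on_char '-' s
    |> List.map (fun word -> Int64.of_string ("0x" ^ word))
    |> Array.of_list

let string_of_features (f : int64 array) : string =
  Array.to_list f |> List.map (Printf.sprintf "%08Lx") |> String.concat "-"

(* Returns [None] if the VM's existing features are not available on the
   host, otherwise the VM's feature string extended with any extra words
   from the (longer) host string. *)
let upgrade_vm_features ~(vm : string) ~(host : string) : string option =
  let v = parse_features vm and h = parse_features host in
  let n = Array.length v in
  let word i = if i < Array.length h then h.(i) else 0L in
  (* Rule 1: compare only the words the VM already has. *)
  let rec allowed i =
    i >= n || (Int64.logand v.(i) (word i) = v.(i) && allowed (i + 1))
  in
  if not (allowed 0) then None
  else
    (* Rule 2: grant the widest possible set for the new words. *)
    let extra =
      if Array.length h > n then Array.sub h n (Array.length h - n) else [||]
    in
    Some (string_of_features (Array.append v extra))
```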

VM import

The VM.last_boot_CPU_flags field must be upgraded to the new format (only really needed for VMs that were suspended while exported; preserve_power_state=true), as described above.

Pool join

Update pool join checks according to the rules above (see pool.join), i.e. remove the CPU features constraints.

Upgrade

- The pool level (pool.cpu_info) will be initialised when the pool master upgrades, and automatically adjusted if needed (downwards) when slaves are upgraded, by each upgraded host’s startup sequence (as above under “Xapi startup”).
- The VM.last_boot_CPU_flags fields of running and suspended VMs will be “upgraded” to the new format on demand, when a VM is migrated to or resumed on an upgraded host, as described above.

XenCenter integration

- Don’t explicitly down-level upon join anymore

- Become aware of new pool join rule

- Update Rolling Pool Upgrade

| Design document | |
|---|---|
| Revision | v1 |
| Status | proposed |

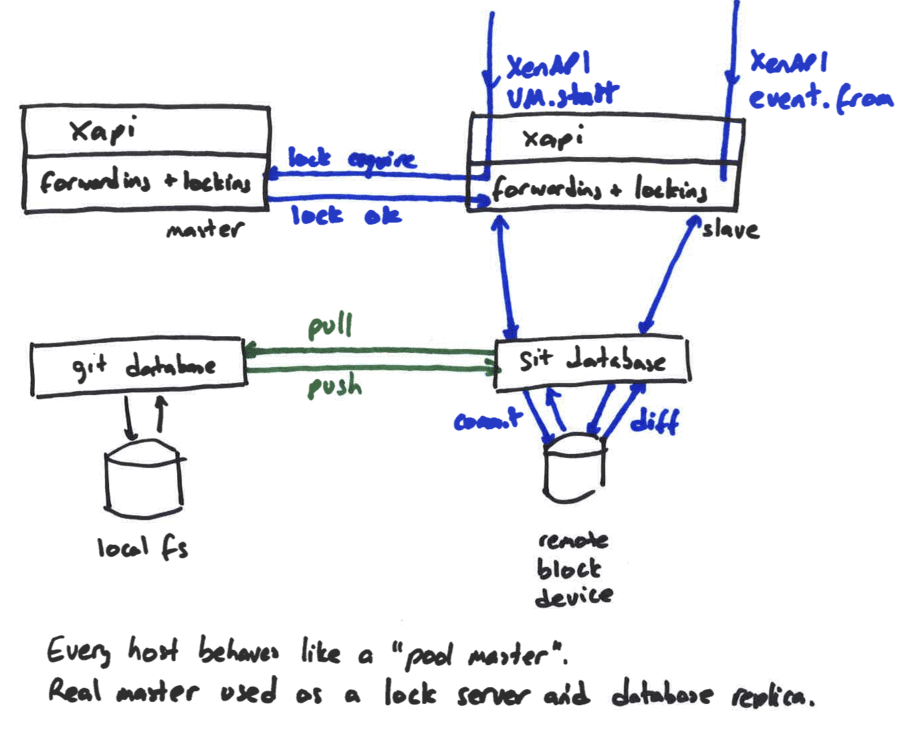

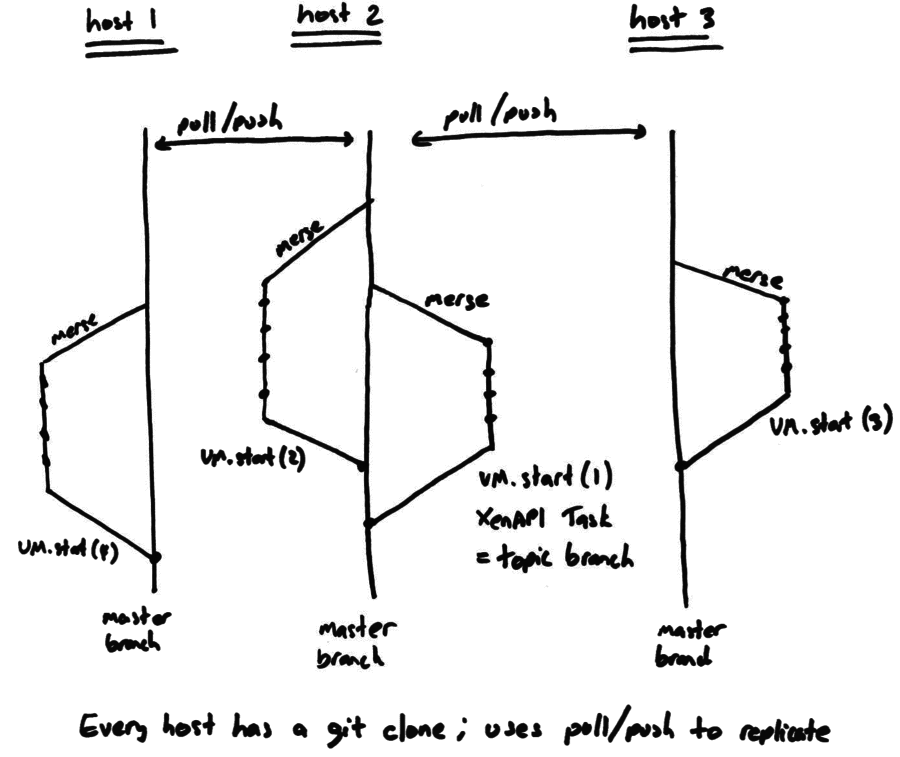

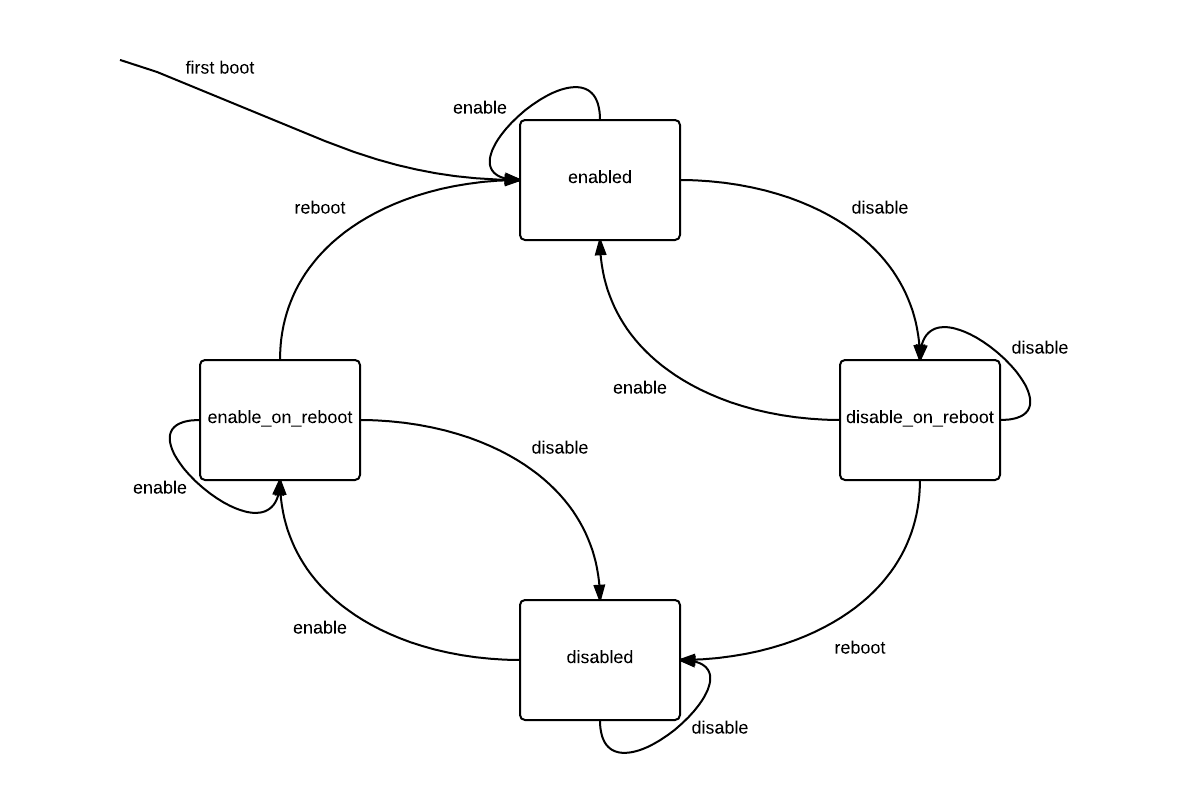

Distributed database

All hosts in a pool use the shared database by sending queries to the pool master. This creates

- a performance bottleneck as the pool size increases

- a reliability problem when the master fails.

The reliability problem can be ameliorated by running with HA enabled, but this is not always possible.

Both problems can be addressed by observing that the database objects correspond to distinct physical objects where eventual consistency is perfectly ok. For example if host ‘A’ is running a VM and changes the VM’s name, it doesn’t matter if it takes a while before the change shows up on host ‘B’. If host ‘B’ changes its network configuration then it doesn’t matter how long it takes host ‘A’ to notice. We would still like the metadata to be replicated to cope with failure, but we can allow changes to be committed locally and synchronised later.